Vectors are the fundamental building blocks of linear algebra

- Therefore, it is of utmost importance to understand the true nature of a vector

The true nature of vectors is very abstract

- We will explore that aspect in a later section

- For now, we will understand it through a representation of vectors that can be easily visualized

¶ Arrow Vectors

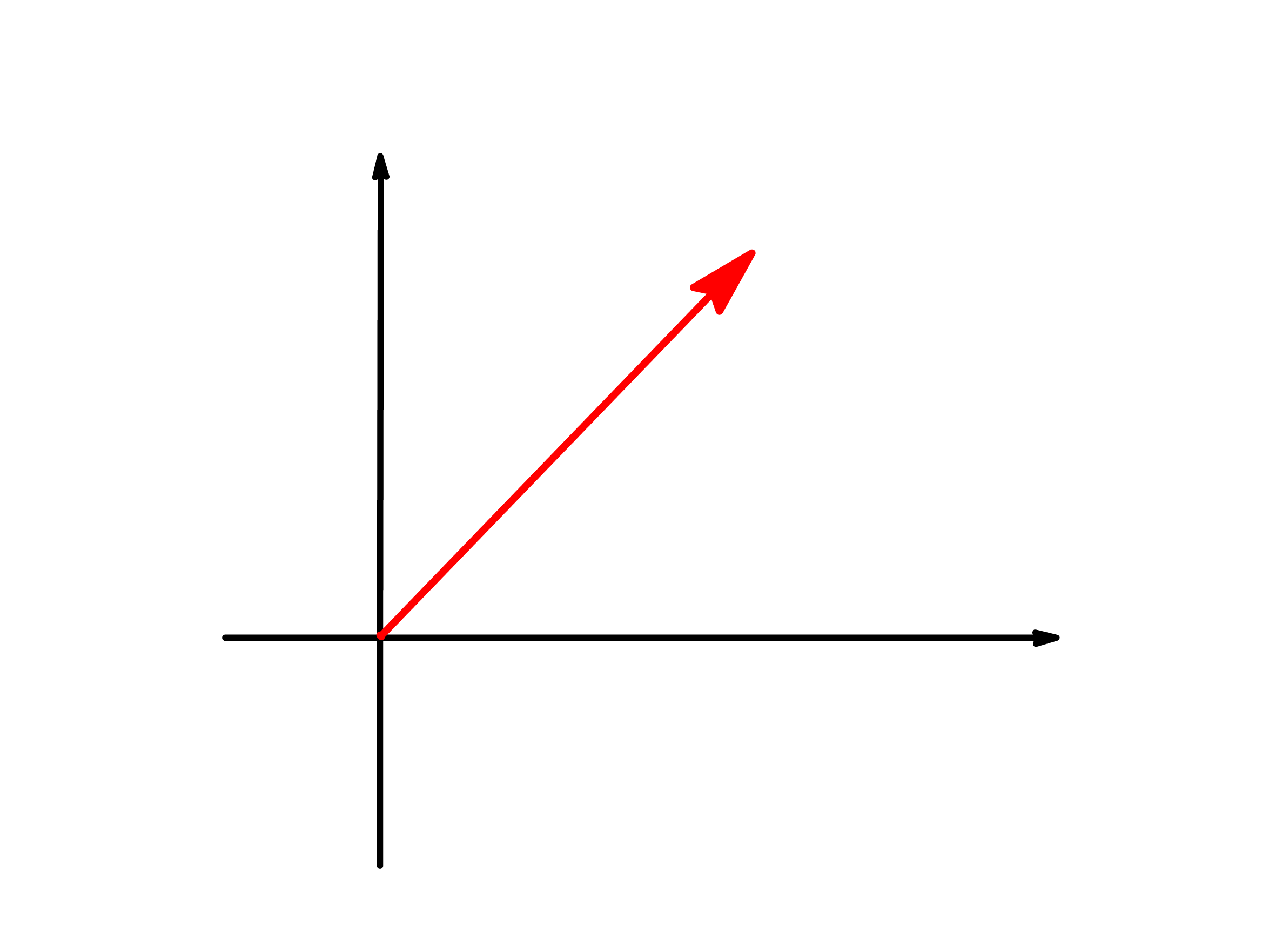

A vector can be represented by an arrow that is pointing from the origin

An arrow vector has two properties

- The length of the arrow vector

- The orientation of the arrow vector

When we want to indicate that a symbol represents an arrow vector, we will put an arrow above said symbol, as in $\vec{v}$

- This style of notation is only relevant when we want to emphasize that the vector should be treated as an arrow

- In later chapters, when we abstractify vectors, we will use a more generalized notation

Vectors are one-dimensional entities

- Despite that, they can inhabit spaces of any dimension

- It should also go without saying that vectors can only be combined with, and can only produce, vectors that live in the same space

A vector must have two basic operations

- Vector Addition

- Scalar Multiplication

¶ Vector Addition

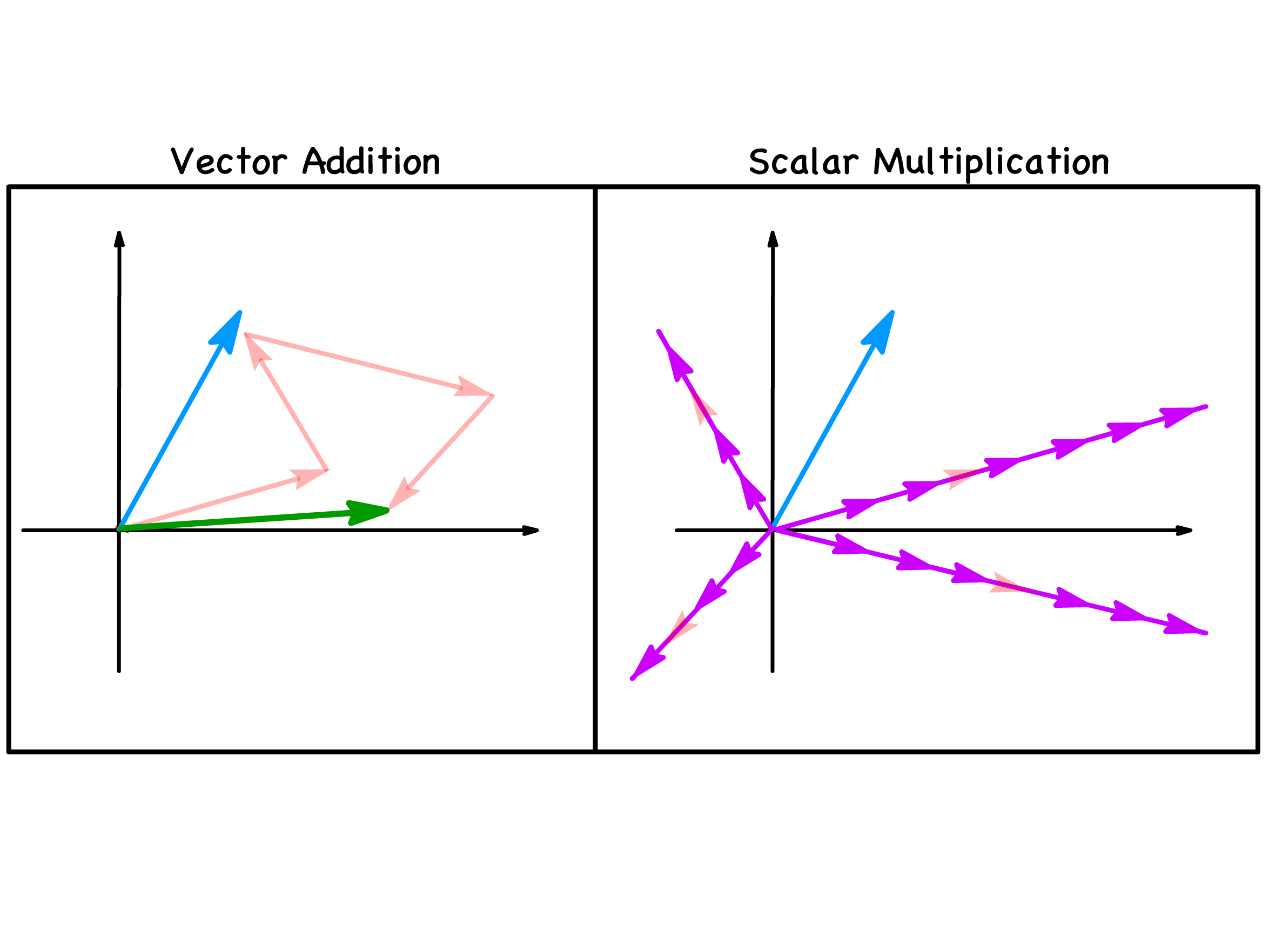

Vector addition is the operation of adding two or more vectors together

- This operation will yield a new vector

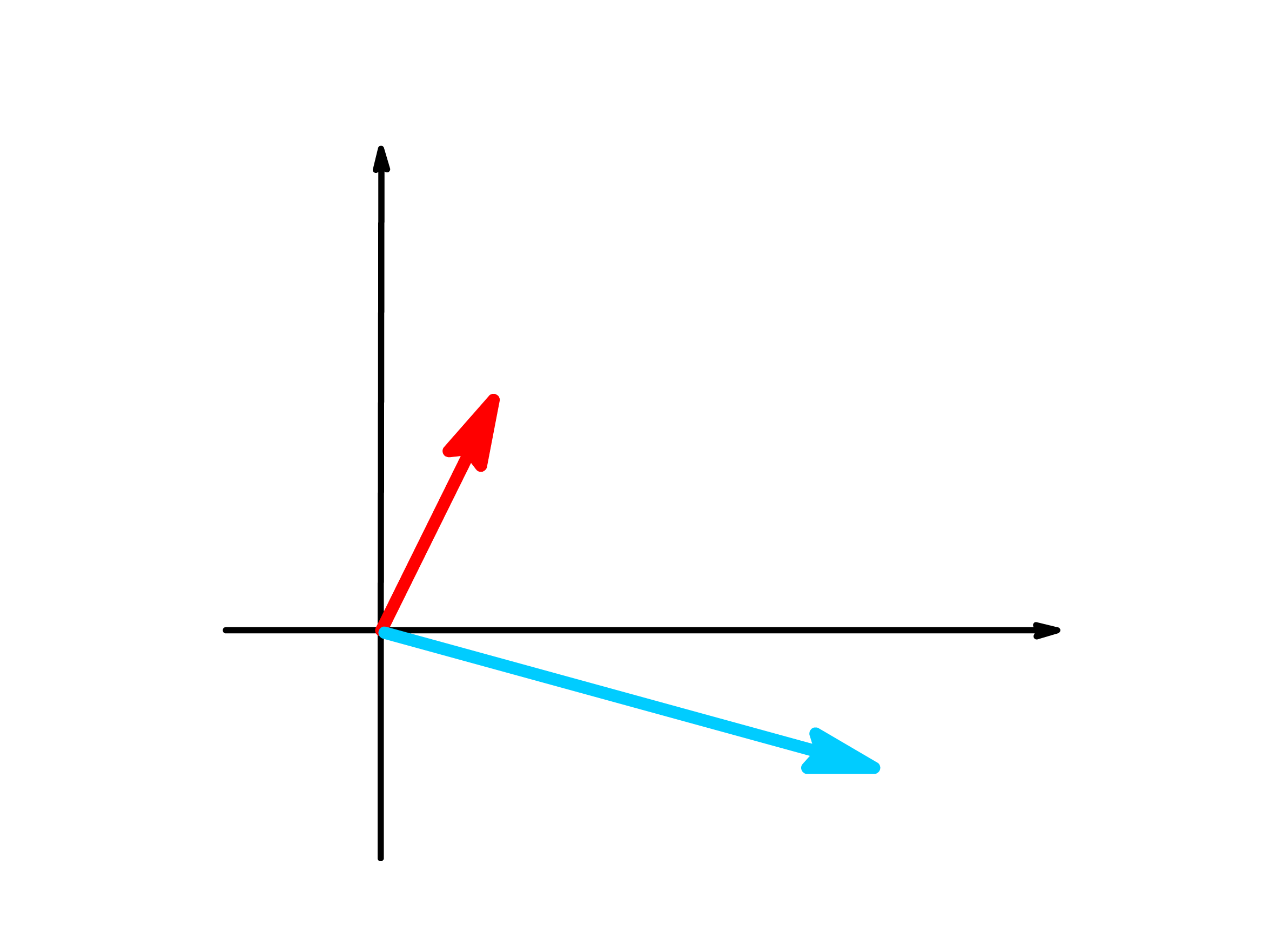

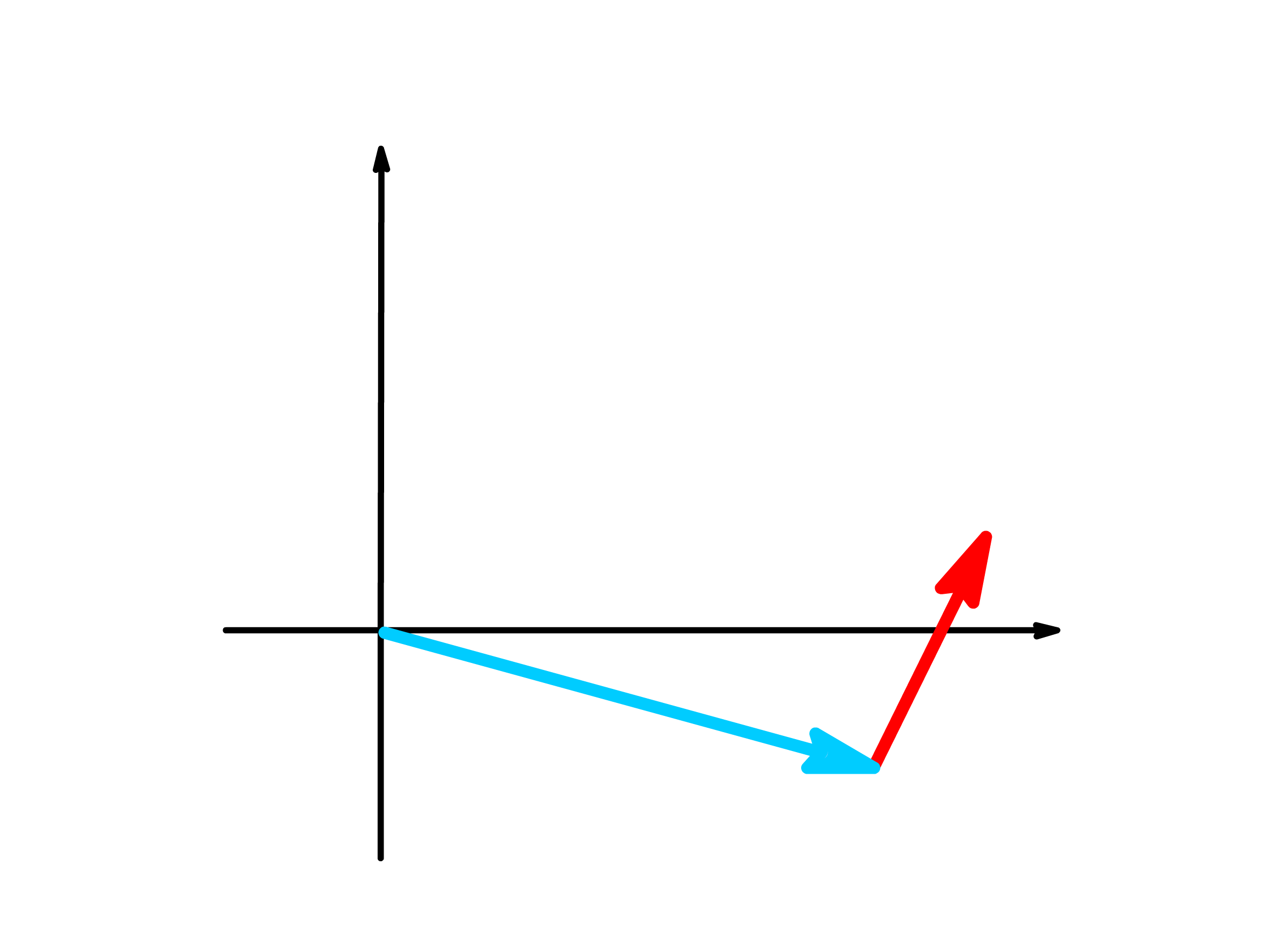

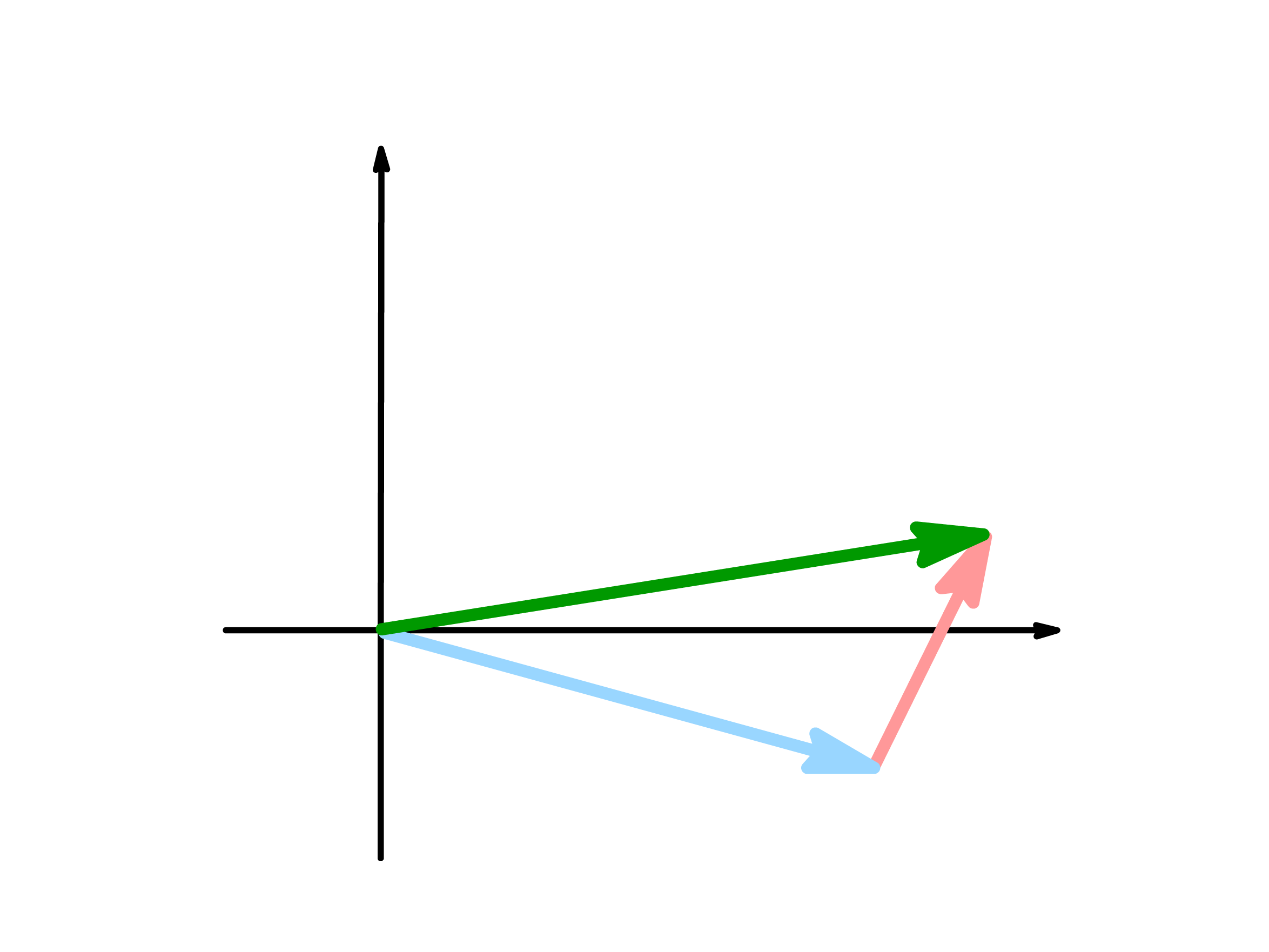

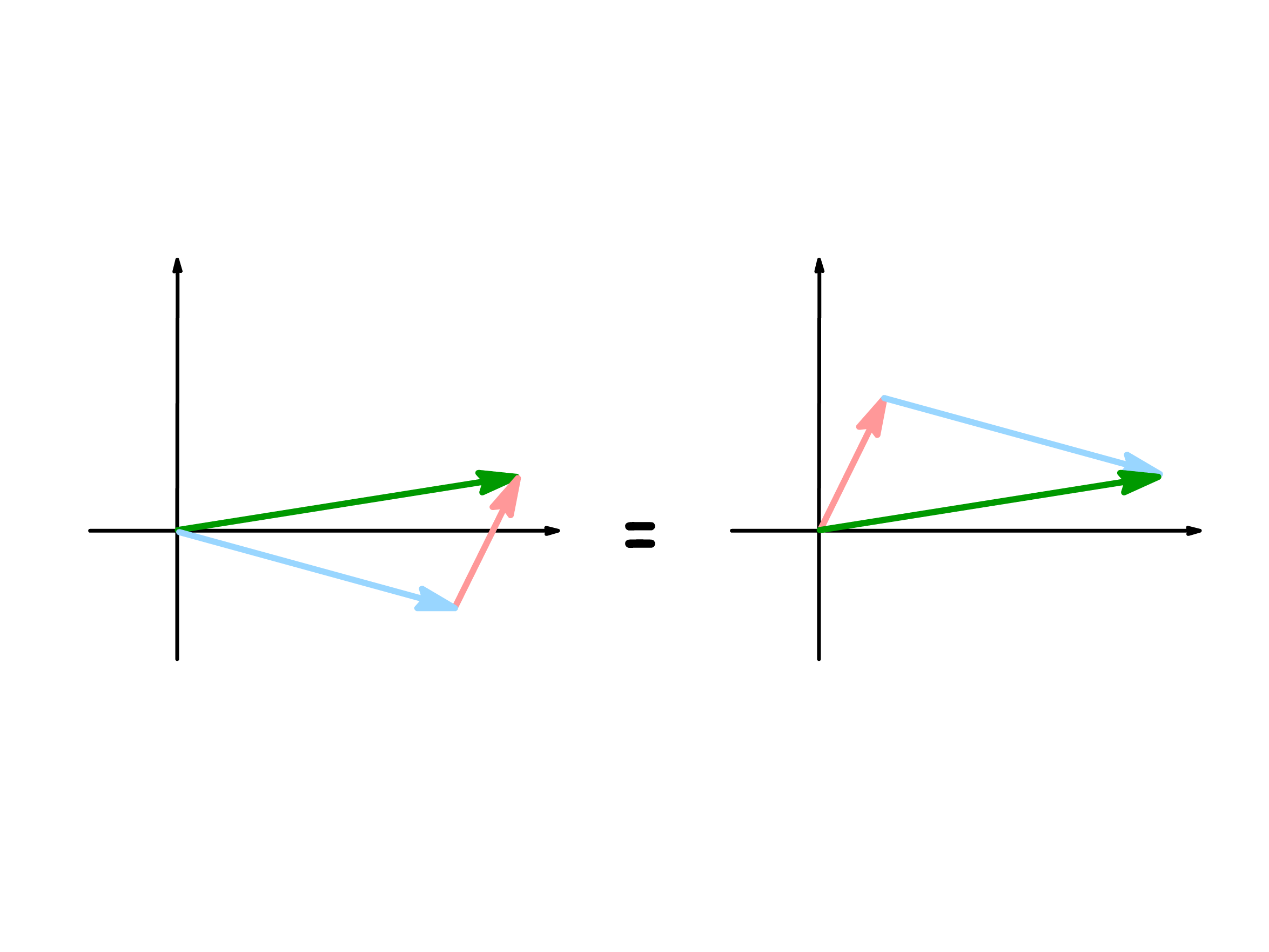

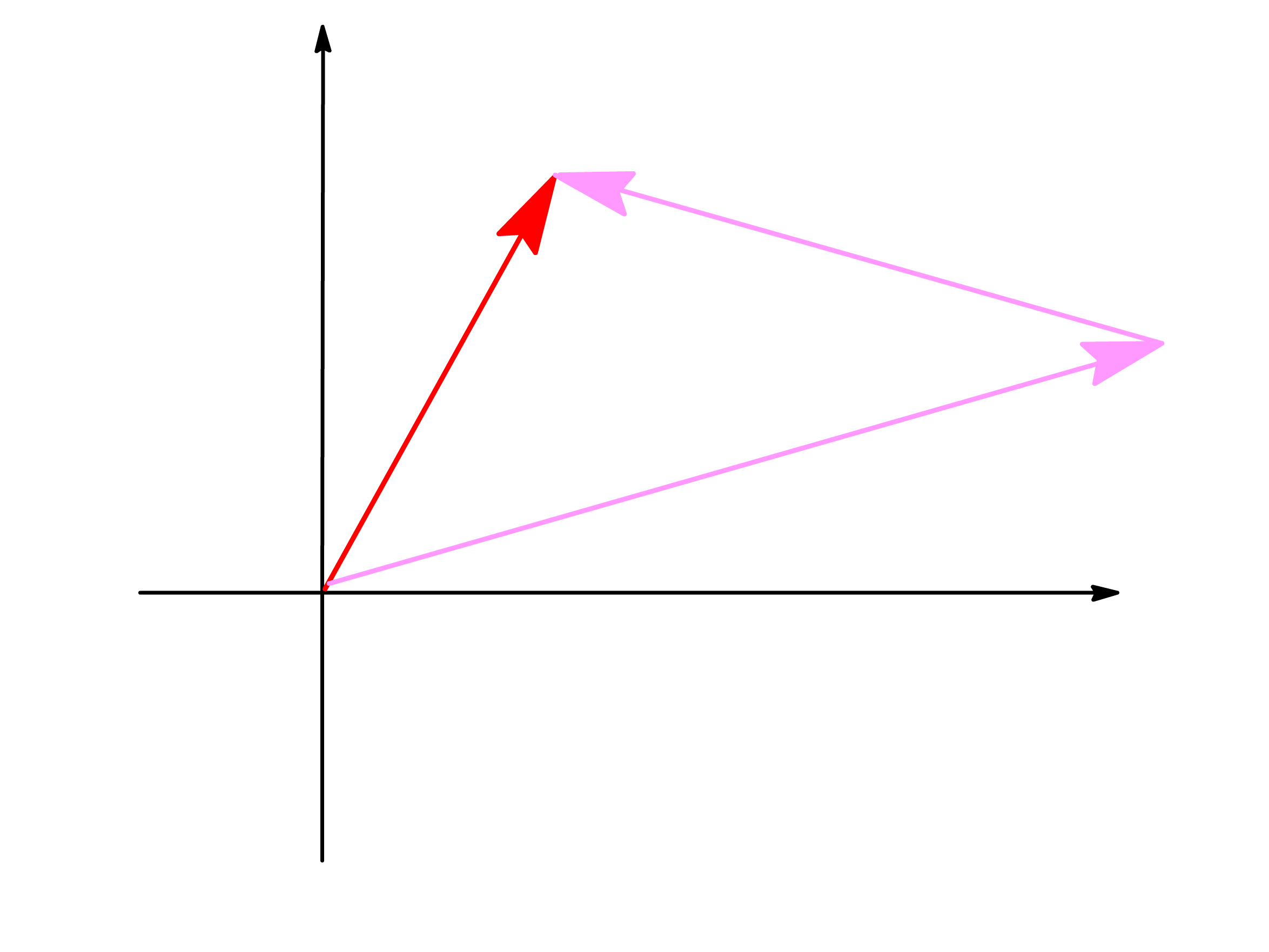

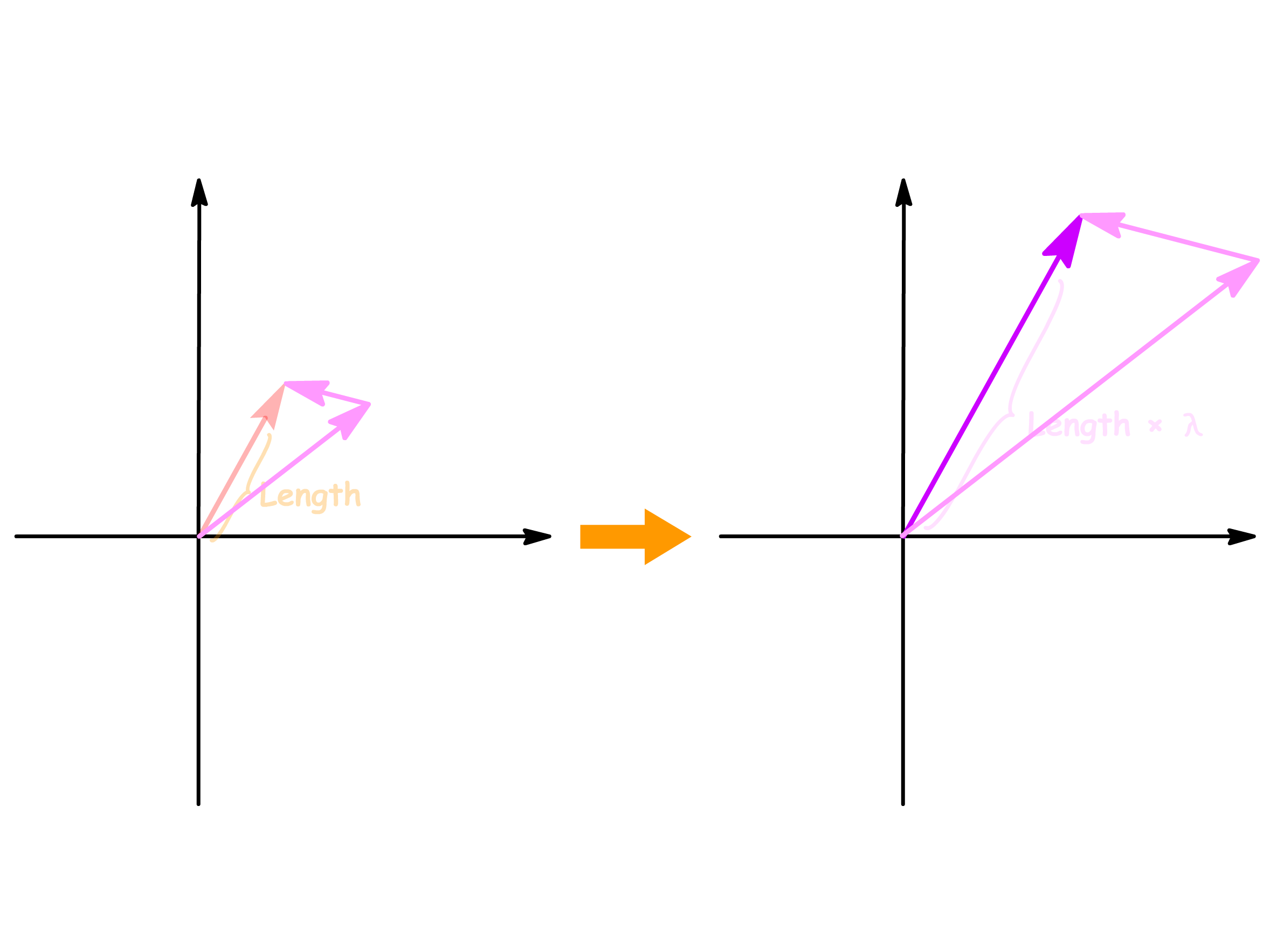

We use the head-to-tail method to determine the vector sum

- Suppose we want to add two arbitrary vectors

- We then place the tail of one of the vectors at the head of the other vector

- We then draw an arrow from the tail of the first vector to the head of the last vector

One way to think about vector addition is to interpret each vector as describing the length and direction of a step we need to take

- The new vector we get via vector addition is the overall effect of following the steps described by each vector

Vector addition is commutative, meaning that the new vector we get does not depend on the order in which the vectors are added

- This can easily be verified by noting that it does not matter which vector's tail is placed at which vector's head
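The "steps" interpretation can be sketched in a few lines of Python. This is only an illustration, and it assumes each arrow is recorded as a (horizontal, vertical) displacement, a representation these notes only formalize later when a basis is introduced. The name `add_vectors` is made up for this example.

```python
def add_vectors(*vectors):
    """Follow every step in turn and return the overall displacement."""
    # zip(*vectors) groups the horizontal parts together and the vertical parts together.
    return tuple(sum(parts) for parts in zip(*vectors))


step_a = (3, 1)   # 3 to the right, 1 up
step_b = (-1, 2)  # 1 to the left, 2 up

a_then_b = add_vectors(step_a, step_b)
b_then_a = add_vectors(step_b, step_a)

print(a_then_b)              # (2, 3)
print(a_then_b == b_then_a)  # True: the order of the steps does not matter
```

Taking the steps in either order ends at the same place, which is the commutativity described above.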

¶ Scalar Multiplication

Scalar multiplication is the operation of multiplying a vector by a number

- This operation will yield a new vector

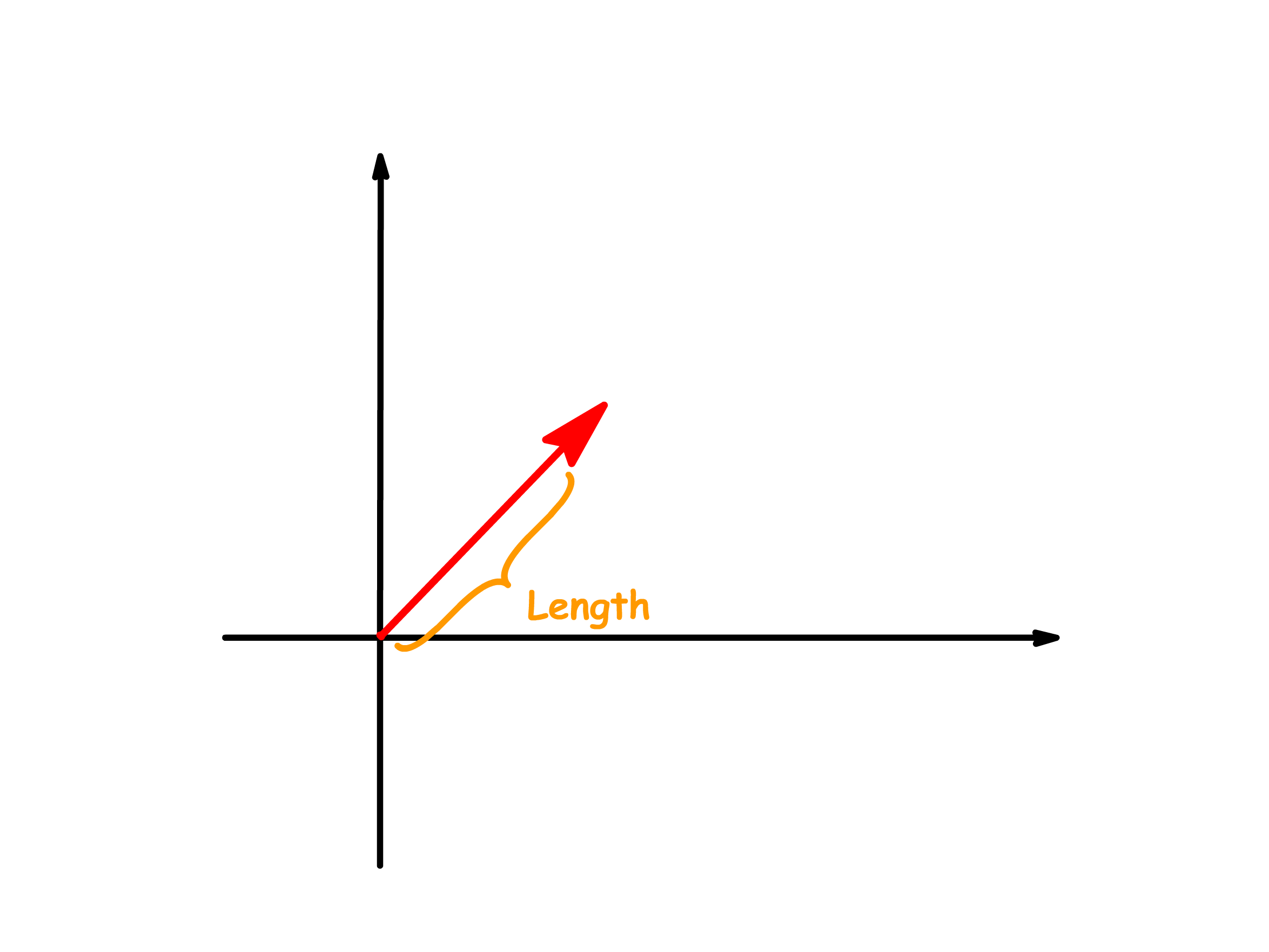

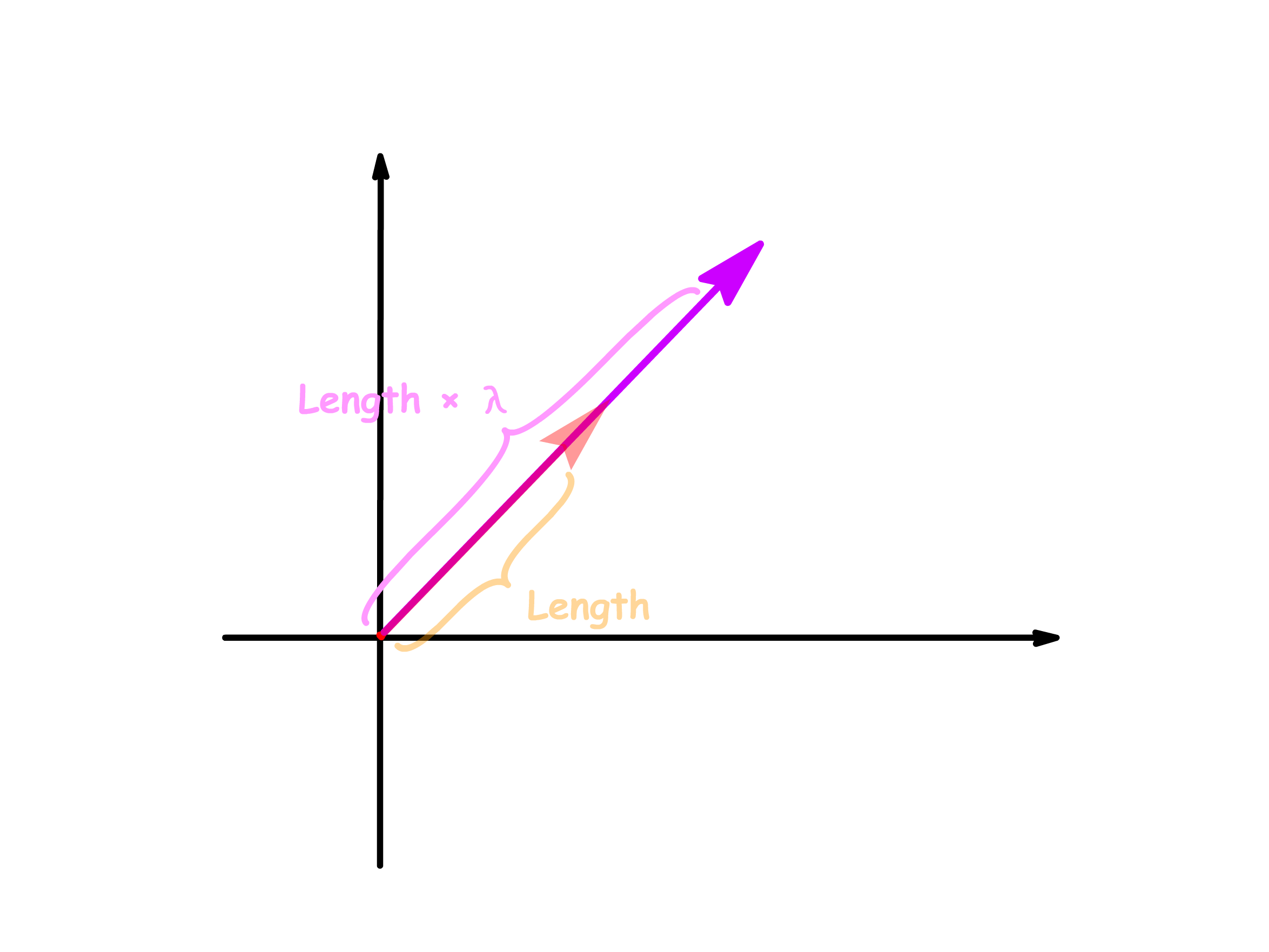

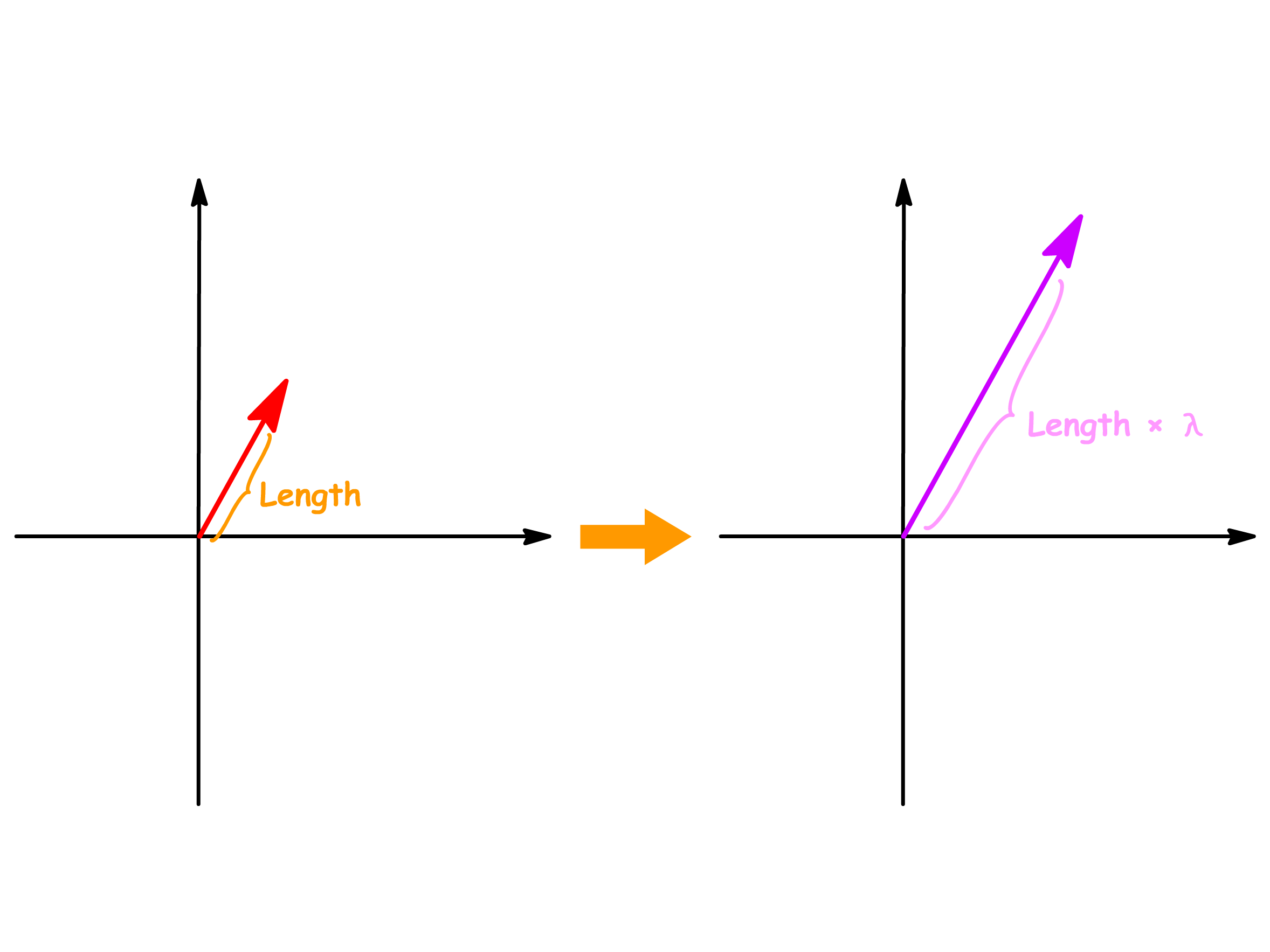

We can determine the scaled vector by examining how the length of the vector changes

- We first determine the length of the vector we are interested in

- We then multiply the length of the vector by the magnitude of the number to get a new length

- We then produce a new vector that has the new length, but the same orientation

When we multiply a vector by a number, we are scaling the vector by that number

- We therefore call the numbers that scale vectors scalars

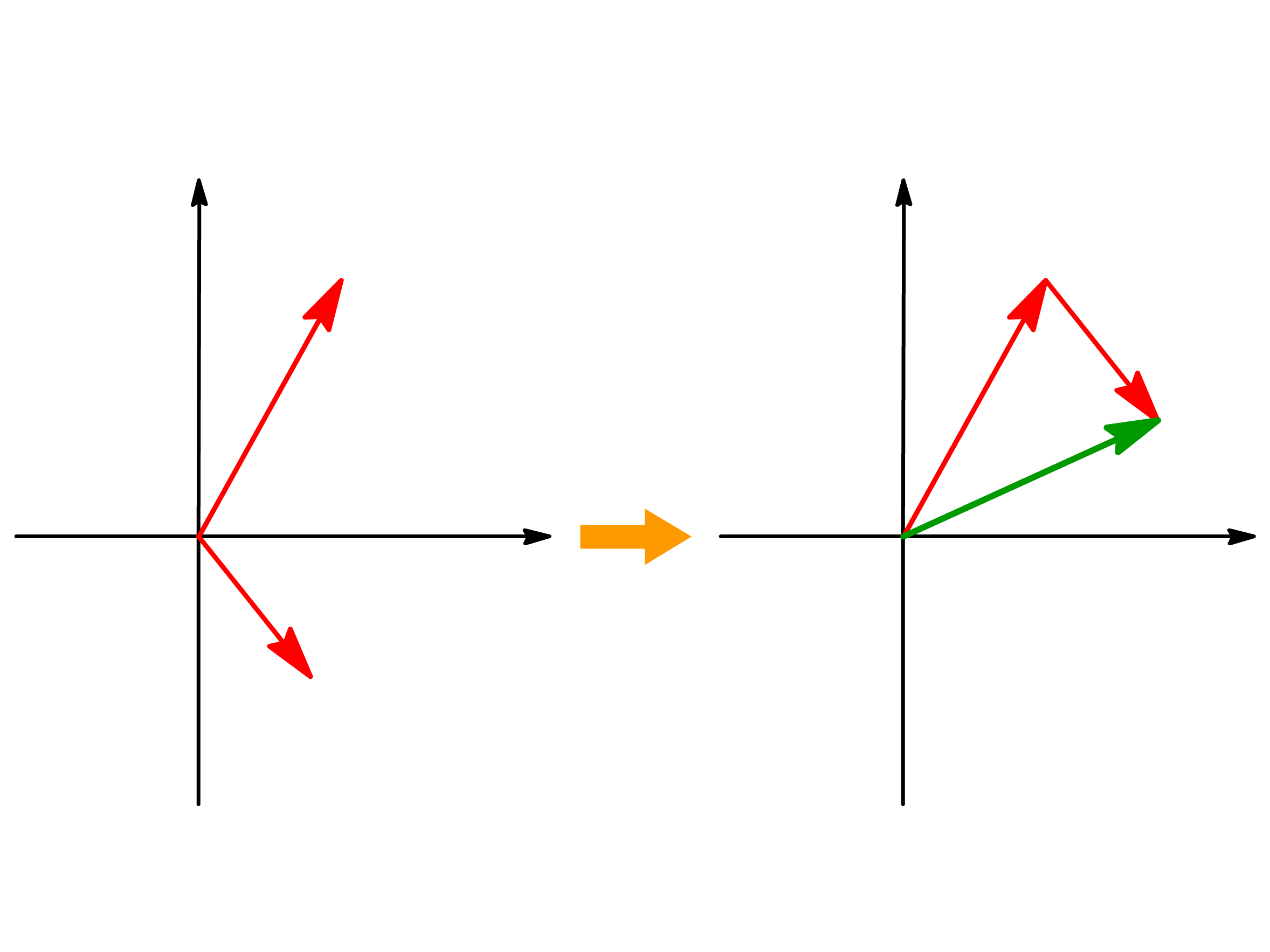

There are two aspects to scalar multiplication

- The sign of the scalar determines whether the direction of the new vector is reversed

  - If the scalar is positive, the direction of the new vector remains unchanged

  - If the scalar is negative, the direction of the new vector is reversed

- The magnitude of the scalar determines the length of the new vector

  - If the magnitude of the scalar is greater than 1, then the vector is stretched

  - If the magnitude of the scalar is between 0 and 1, then the vector is squished

  - If the magnitude of the scalar is exactly 1, then the length of the vector remains unchanged

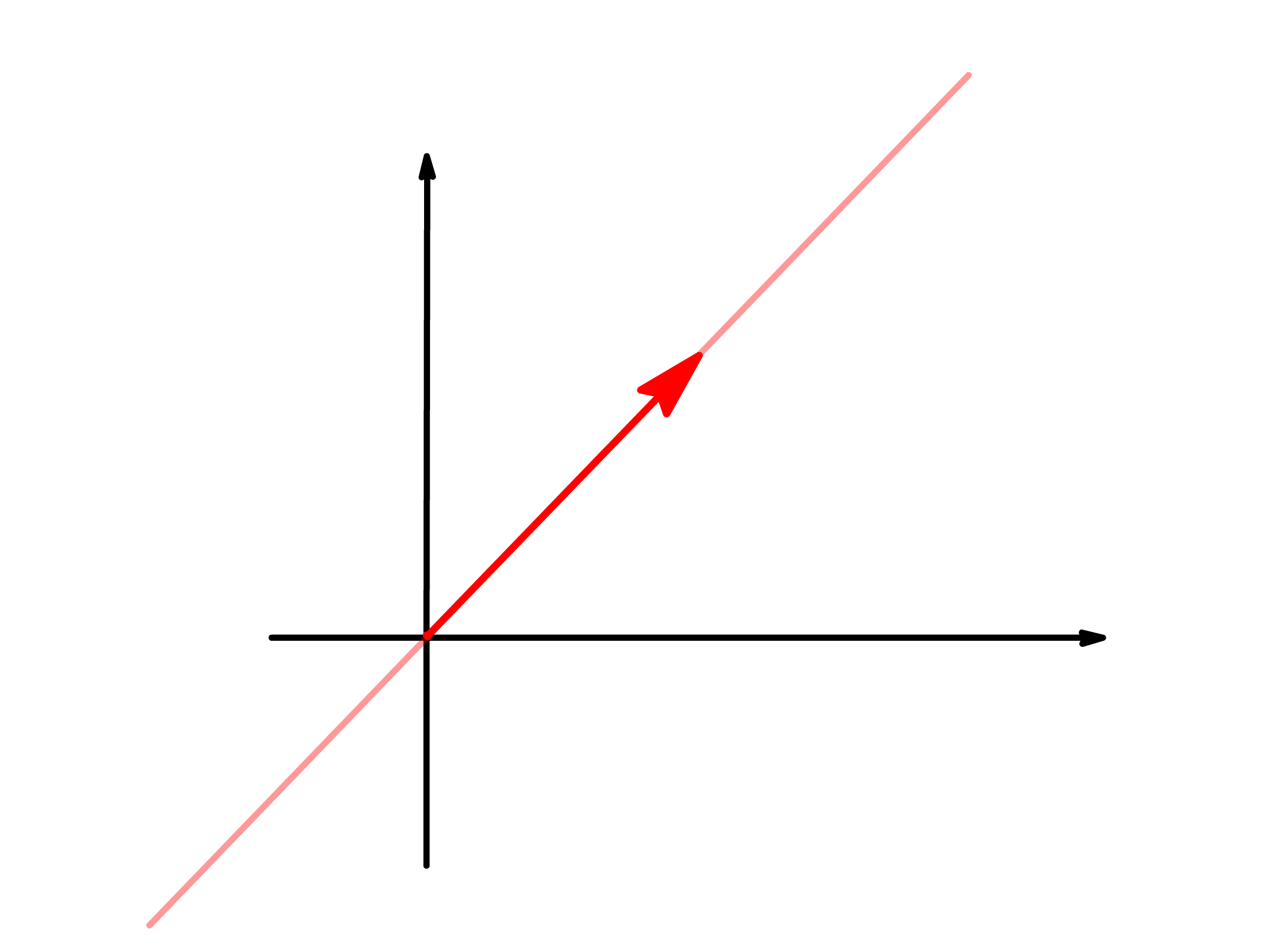

It should be noted that although scalar multiplication can change the direction and length of the vector, the vector will always remain on the same line

- We can reach every point on this line through scalar multiplication alone, but no point off the line can be reached simply by scaling the vector
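The effect of the sign and magnitude of a scalar can be checked numerically. The sketch below again assumes arrows are stored as (horizontal, vertical) displacements; `length`, `scale` and `on_same_line` are hypothetical helper names chosen for this example.

```python
import math


def length(v):
    """Length of a 2D arrow stored as (horizontal, vertical)."""
    return math.hypot(v[0], v[1])


def scale(c, v):
    """Multiply every part of the arrow by the scalar c."""
    return tuple(c * x for x in v)


def on_same_line(v, w):
    """True when v and w lie on one line through the origin (zero 2D cross term)."""
    return v[0] * w[1] - v[1] * w[0] == 0


v = (3.0, 4.0)
stretched = scale(2, v)         # positive scalar, magnitude above 1
reversed_half = scale(-0.5, v)  # negative scalar, magnitude between 0 and 1

print(length(v), length(stretched), length(reversed_half))  # 5.0 10.0 2.5
print(on_same_line(v, stretched), on_same_line(v, reversed_half))  # True True
```

The lengths change by the magnitude of the scalar, and both results stay on the line through the original arrow.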

¶ Linearity

¶ Linear Combination

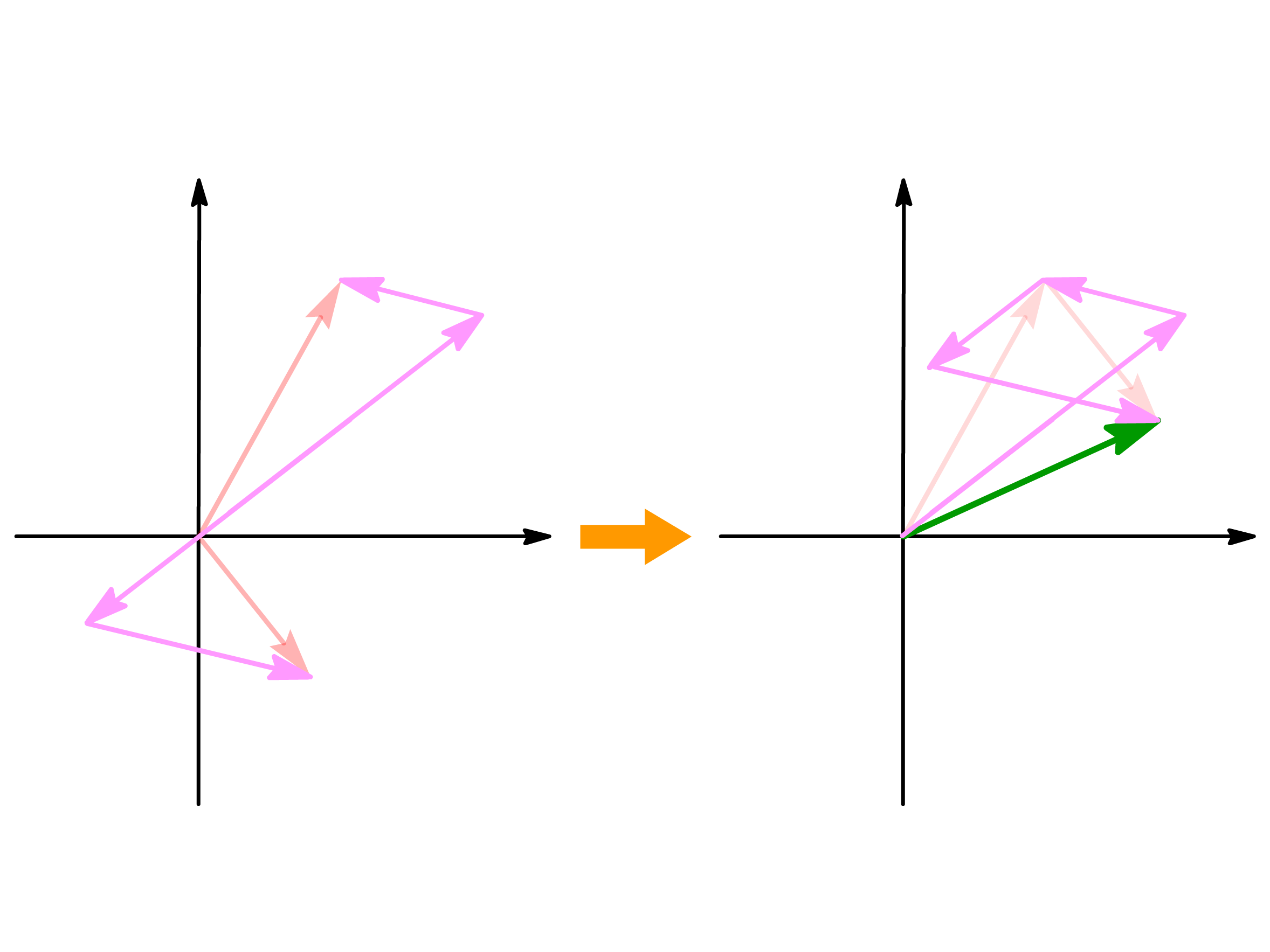

Both vector addition and scalar multiplication are operations that allow us to produce new vectors. However, each individual operation alone is usually inadequate to obtain a chosen target vector

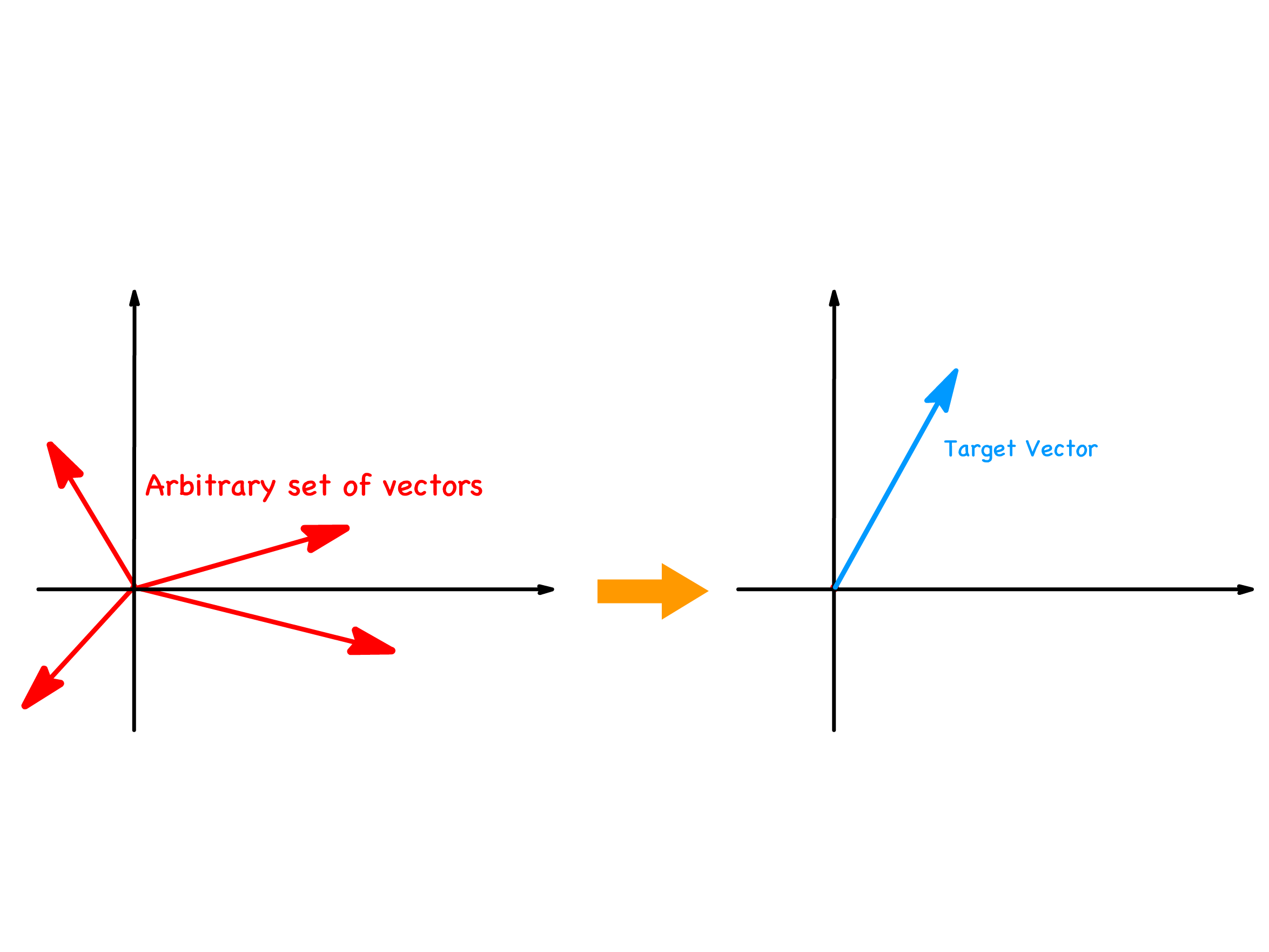

Suppose we want to produce a target vector from a set of arbitrary vectors using the basic vector operations

It is unlikely that our desired vector will just happen to be the vector sum of that set of vectors or the scalar multiple of one of those vectors

However, if we were to make use of both operations simultaneously, we would gain far more freedom to manipulate these vectors

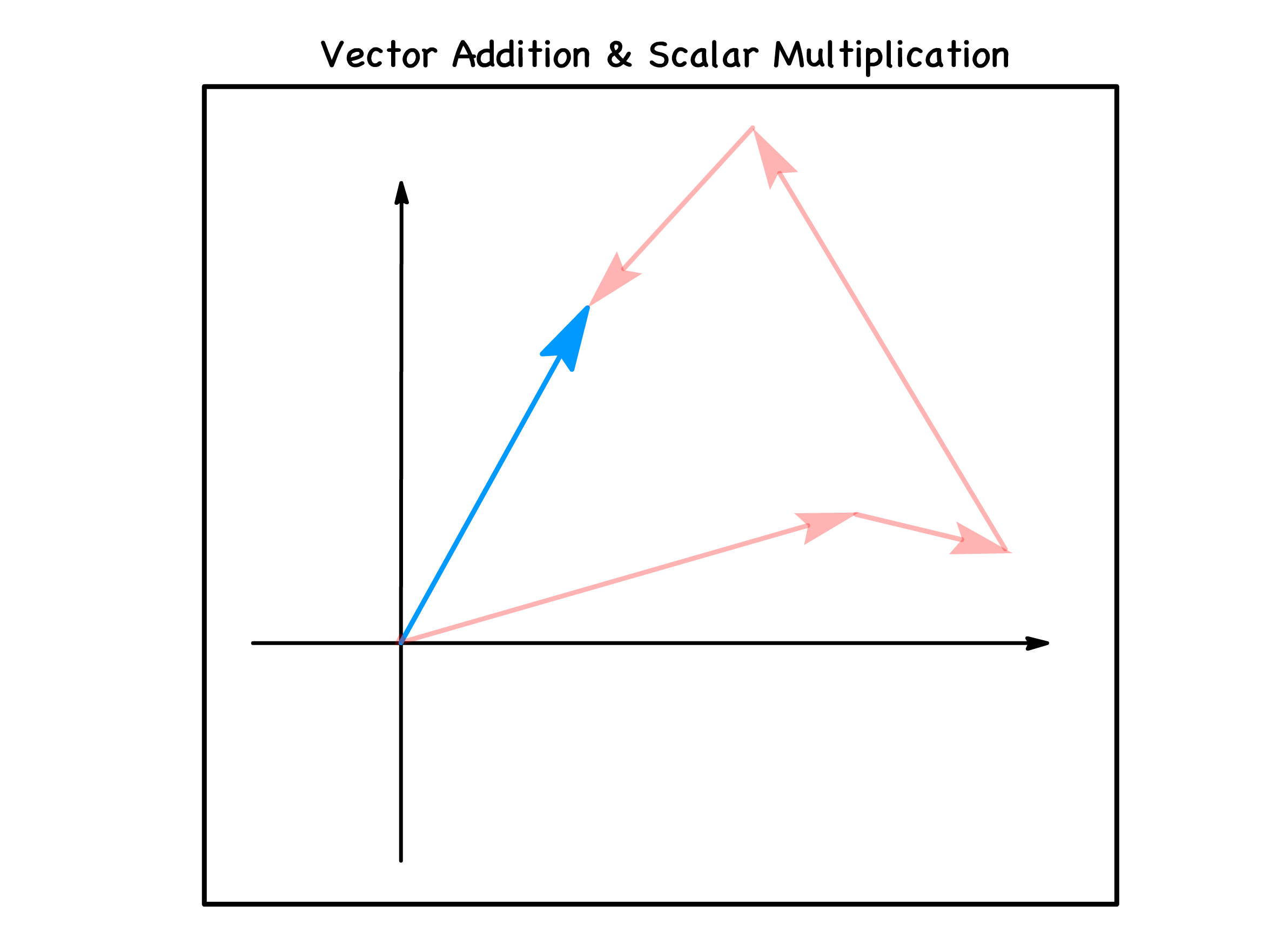

This combination of the two operations is called a linear combination

Formally, a linear combination is an operation that produces new vectors by performing vector additions and scalar multiplications simultaneously

- In other words, we are adding together a set of scaled vectors, $c_1 \vec{v}_1 + c_2 \vec{v}_2 + \dots + c_n \vec{v}_n$

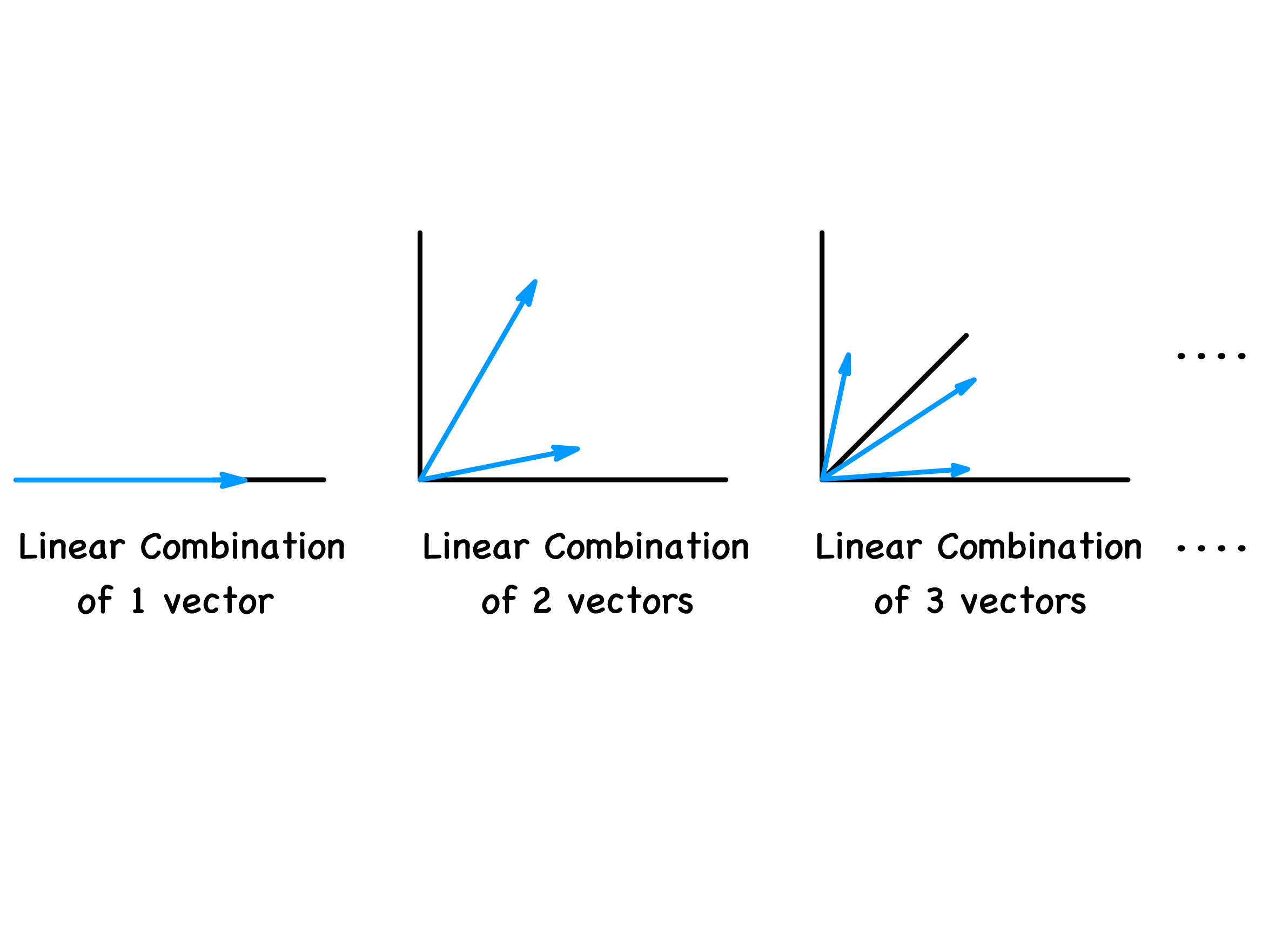

Linear combinations are powerful because every extra vector in the set can potentially give us access to an extra dimension of vectors

- In general, it is possible to produce every vector in an n-dimensional space using a linear combination of n vectors
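As a rough illustration of "adding together a set of scaled vectors", the sketch below computes a linear combination part by part. It assumes arrows are stored as tuples of numbers of equal length; `linear_combination` is a name invented for this example.

```python
def linear_combination(scalars, vectors):
    """Scale each vector by its scalar, then add all the scaled vectors together."""
    if len(scalars) != len(vectors):
        raise ValueError("need exactly one scalar per vector")
    dimension = len(vectors[0])
    total = [0] * dimension
    for c, v in zip(scalars, vectors):
        for i in range(dimension):
            total[i] += c * v[i]
    return tuple(total)


a = (1, 0)
b = (1, 1)

# Neither a + b nor any multiple of a or b alone gives (5, 3),
# but the combination 2a + 3b does.
target = linear_combination([2, 3], [a, b])
print(target)  # (5, 3)
```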

¶ Linear Dependency

We have established that a linear combination of n vectors can potentially allow us to tap into all n dimensions

However, there are some conditions that must be fulfilled in order to do so

The limitation stems from the set of vectors we choose to combine. It turns out that not every set of vectors can make full use of linear combinations

The set of all possible vectors we can reach with a linear combination of a set of vectors is called the span of the set

The dimension of the span can be at most the total number of vectors in the set

If the dimension of the span is smaller than the total number of vectors in the set, then there must be at least one redundant vector

Redundant vectors can be thought of as members that sit in the span created by the other members of the set

- By virtue of existing in the span, said redundant vectors must therefore be obtainable through a linear combination of the other members of the set

- If there are redundant vectors in a set of n vectors, then the linear combinations of those n vectors can only give us access to vectors in fewer than n dimensions

The property of a set having one or more redundant members is called linear dependency

- If there is at least one redundant member in the set, then the set is linearly dependent

- If there is no redundant member in the set, then the set is linearly independent

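Assuming there is no null vector in the set, we can define linear dependency in terms of the equality $c_1 \vec{v}_1 + c_2 \vec{v}_2 + \dots + c_n \vec{v}_n = \vec{0}$, where $\vec{v}_1, \dots, \vec{v}_n$ are the vectors in the set

- The set of vectors is said to be linearly independent if the equality can only hold true when all the scalars, $c_1, \dots, c_n$, are equal to zero

- The set of vectors is said to be linearly dependent if the equality can hold true even when some of the scalars, $c_1, \dots, c_n$, are non-zero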

Using our definition of linear dependency, we can define the dimension of the space we are working in

- A space has a dimension of n if it can accommodate a maximum of n linearly independent vectors
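One way to test a set for redundant members is to compute the dimension of its span and compare it with the number of vectors. The sketch below does this with Gaussian elimination, a technique these notes do not cover, so treat it as an assumption brought in for illustration. The function names are hypothetical, and exact fractions are used to avoid rounding issues.

```python
from fractions import Fraction


def span_dimension(vectors):
    """Dimension of the span of the vectors, found by Gaussian elimination."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    n_cols = len(rows[0]) if rows else 0
    rank = 0
    for col in range(n_cols):
        # Look for a row, at or below position `rank`, with a non-zero entry in this column.
        pivot = next((r for r in range(rank, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[rank], rows[pivot] = rows[pivot], rows[rank]
        # Subtract multiples of the pivot row to clear this column in the rows below.
        for r in range(rank + 1, len(rows)):
            factor = rows[r][col] / rows[rank][col]
            rows[r] = [x - factor * p for x, p in zip(rows[r], rows[rank])]
        rank += 1
    return rank


def is_linearly_independent(vectors):
    """A set is independent when its span has as many dimensions as it has vectors."""
    return span_dimension(vectors) == len(vectors)


independent_set = [(1, 0, 0), (0, 1, 0), (1, 1, 1)]
dependent_set = [(1, 2, 3), (2, 4, 6), (0, 1, 1)]  # the second is twice the first

print(span_dimension(independent_set), is_linearly_independent(independent_set))  # 3 True
print(span_dimension(dependent_set), is_linearly_independent(dependent_set))      # 2 False
```

In the dependent set, three vectors only reach a two-dimensional span, so at least one of them is redundant.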

¶ A Frame of Reference

¶ Basis Vectors

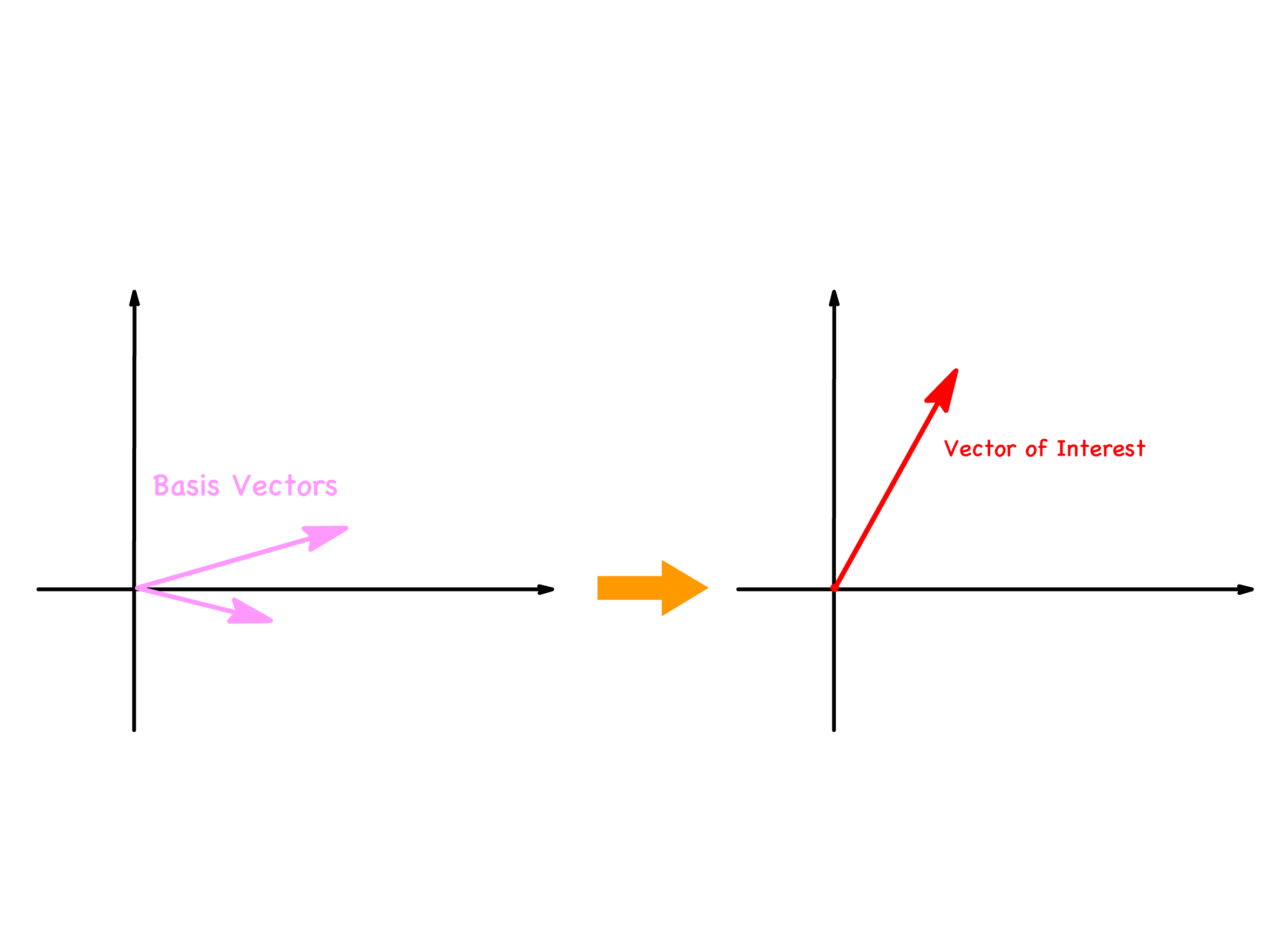

So far in the discussion of vectors, we have used their geometry to explain the different kinds of operations and how they relate to each other

- It is very inconvenient to plot out a graph every time we wish to talk about vectors

- We therefore need to formalize a mathematical tool that allows us to describe vectors

We have established that any vector in an n-dimensional space can be written as a linear combination of any set of n linearly independent vectors

- This means that we can choose a set of n linearly independent vectors and express every vector in the space as a linear combination of the chosen set

- We call the chosen vectors the basis vectors, and the scalars the components in that basis

We can show the process of expressing a vector in terms of a basis geometrically as well

- Suppose we have chosen a basis and we want to express a vector in terms of it

- All we need to do is to scale each of the basis vectors by the corresponding component and add them together, $\vec{v} = v_1 \vec{e}_1 + v_2 \vec{e}_2 + \dots + v_n \vec{e}_n$

If we already know what the choice of basis is, we can further simplify the expression of vectors

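- Suppose the implicit choice of basis vectors is $\vec{e}_1, \vec{e}_2, \dots, \vec{e}_n$

- Then the only information relevant for identifying the vector will be the set of components in that basis, $v_1, v_2, \dots, v_n$

- For the sake of simplicity, we will usually denote the vector as a column of numbers, $\vec{v} = \begin{bmatrix} v_1 \\ v_2 \\ \vdots \\ v_n \end{bmatrix}$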

- It is important to note that this notation only works when the choice of basis has been made clear or heavily implied

A linear combination of n linearly independent vectors allows us to tap into all n dimensions

- Although vectors themselves are 1-dimensional entities, we usually refer to vectors inhabiting an n-dimensional space as n-dimensional vectors

- If a vector requires n basis vectors to express it, then said vector is an n-dimensional vector

- The number of entries in the column reflects the number of basis vectors needed, which in turn tells us the dimension of the vector

We can also express the basis vectors in terms of a column of numbers

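- In their own basis, each basis vector has a component of 1 along itself and 0 along every other basis vector

- When we write them as column vectors in their own basis, we get $\vec{e}_1 = \begin{bmatrix} 1 \\ 0 \\ \vdots \\ 0 \end{bmatrix}, \ \vec{e}_2 = \begin{bmatrix} 0 \\ 1 \\ \vdots \\ 0 \end{bmatrix}, \ \dots, \ \vec{e}_n = \begin{bmatrix} 0 \\ 0 \\ \vdots \\ 1 \end{bmatrix}$

- Hence, every basis vector in its own basis must be a column of 0s with a single 1

- But when the basis vectors are expressed in another basis, that will no longer hold true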
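To make the idea of components concrete, the sketch below finds the components of a 2D arrow in a chosen basis. It assumes every arrow is first recorded on a common reference grid with integer coordinates, and it uses Cramer's rule, which is not part of these notes, to solve for the two scalars. `components_in_basis` is a hypothetical name.

```python
from fractions import Fraction


def components_in_basis(v, e1, e2):
    """Solve v = c1*e1 + c2*e2 for (c1, c2) using Cramer's rule (2D, integer inputs)."""
    det = e1[0] * e2[1] - e1[1] * e2[0]
    if det == 0:
        raise ValueError("e1 and e2 are linearly dependent, so they do not form a basis")
    c1 = Fraction(v[0] * e2[1] - v[1] * e2[0], det)
    c2 = Fraction(e1[0] * v[1] - e1[1] * v[0], det)
    return c1, c2


e1 = (2, 1)
e2 = (-1, 1)
v = (4, 5)

# The same arrow has different columns of numbers in different bases.
in_chosen_basis = components_in_basis(v, e1, e2)         # equals (3, 2)
in_reference_grid = components_in_basis(v, (1, 0), (0, 1))  # equals (4, 5)

# Each basis vector, written in its own basis, is a column of 0s with a single 1.
e1_in_own_basis = components_in_basis(e1, e1, e2)  # equals (1, 0)
e2_in_own_basis = components_in_basis(e2, e1, e2)  # equals (0, 1)
```

Here 3 copies of `e1` plus 2 copies of `e2` rebuild the arrow `(4, 5)`, which is why the column only makes sense once the basis is known.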

¶ Vector Algebra

Having introduced basis vectors, we can perform many kinds of computation much more easily

- We can redefine the different vector operations in terms of the components in the chosen basis

We can redefine vector addition

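- We first defined vector addition using the head-to-tail method

- If we were to express the two vectors in terms of the basis vectors, we would have $\vec{v} = v_1 \vec{e}_1 + v_2 \vec{e}_2 + \dots + v_n \vec{e}_n$ and $\vec{w} = w_1 \vec{e}_1 + w_2 \vec{e}_2 + \dots + w_n \vec{e}_n$

- We can see that the vector addition can be represented by the addition of the scaled basis vectors, $\vec{v} + \vec{w} = (v_1 \vec{e}_1 + \dots + v_n \vec{e}_n) + (w_1 \vec{e}_1 + \dots + w_n \vec{e}_n)$

- Since we are expressing both vectors in the same basis, we can simplify the expression to $\vec{v} + \vec{w} = (v_1 + w_1) \vec{e}_1 + (v_2 + w_2) \vec{e}_2 + \dots + (v_n + w_n) \vec{e}_n$

- If the choice of basis is implicit, then we can write it as $\begin{bmatrix} v_1 \\ \vdots \\ v_n \end{bmatrix} + \begin{bmatrix} w_1 \\ \vdots \\ w_n \end{bmatrix} = \begin{bmatrix} v_1 + w_1 \\ \vdots \\ v_n + w_n \end{bmatrix}$

- We can see that the corresponding components are added together in vector addition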

We can redefine scalar multiplication

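- We first defined scalar multiplication as something that changes the length of the vector whilst keeping its orientation

- If we were to express the vector in terms of the basis vectors, we would have $\vec{v} = v_1 \vec{e}_1 + v_2 \vec{e}_2 + \dots + v_n \vec{e}_n$

- In order to preserve the orientation of the scaled vector, all the scaled basis vectors are scaled to the same extent, $c \vec{v} = c (v_1 \vec{e}_1) + c (v_2 \vec{e}_2) + \dots + c (v_n \vec{e}_n)$

- A simple regrouping of the factors allows us to simplify the expression to $c \vec{v} = (c v_1) \vec{e}_1 + (c v_2) \vec{e}_2 + \dots + (c v_n) \vec{e}_n$

- If the choice of basis is implicit, then we can write it as $c \begin{bmatrix} v_1 \\ \vdots \\ v_n \end{bmatrix} = \begin{bmatrix} c v_1 \\ \vdots \\ c v_n \end{bmatrix}$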
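The component rules can be checked against the geometric picture with a small sketch. It assumes a basis whose arrows are recorded on a reference grid, and it rebuilds each arrow from its components to confirm that operating on the columns gives the same result as operating on the arrows. All function names are invented for this example.

```python
def add_components(v, w):
    """Vector addition on columns: add corresponding components."""
    if len(v) != len(w):
        raise ValueError("both columns must use the same basis")
    return [a + b for a, b in zip(v, w)]


def scale_components(c, v):
    """Scalar multiplication on columns: scale every component by c."""
    return [c * a for a in v]


def from_components(components, basis):
    """Rebuild the arrow by scaling each basis vector and adding the results."""
    dimension = len(basis[0])
    return tuple(
        sum(c * e[i] for c, e in zip(components, basis)) for i in range(dimension)
    )


basis = [(2, 1), (-1, 1)]  # basis arrows drawn on the reference grid
v = [3, 2]                 # components of v in this basis
w = [1, -1]                # components of w in this basis

arrow_v = from_components(v, basis)  # (4, 5)
arrow_w = from_components(w, basis)  # (3, 0)

# Adding the columns and then rebuilding matches adding the arrows head to tail.
sum_arrow = from_components(add_components(v, w), basis)         # (7, 5)
scaled_arrow = from_components(scale_components(2, v), basis)    # (8, 10)
```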

¶ Dot Product

Having introduced the two fundamental vector operations, we now proceed to explore an operation that is not fundamental, but extremely useful

- This operation is called the dot product, and it is a vector operation that takes in two vectors and outputs a scalar

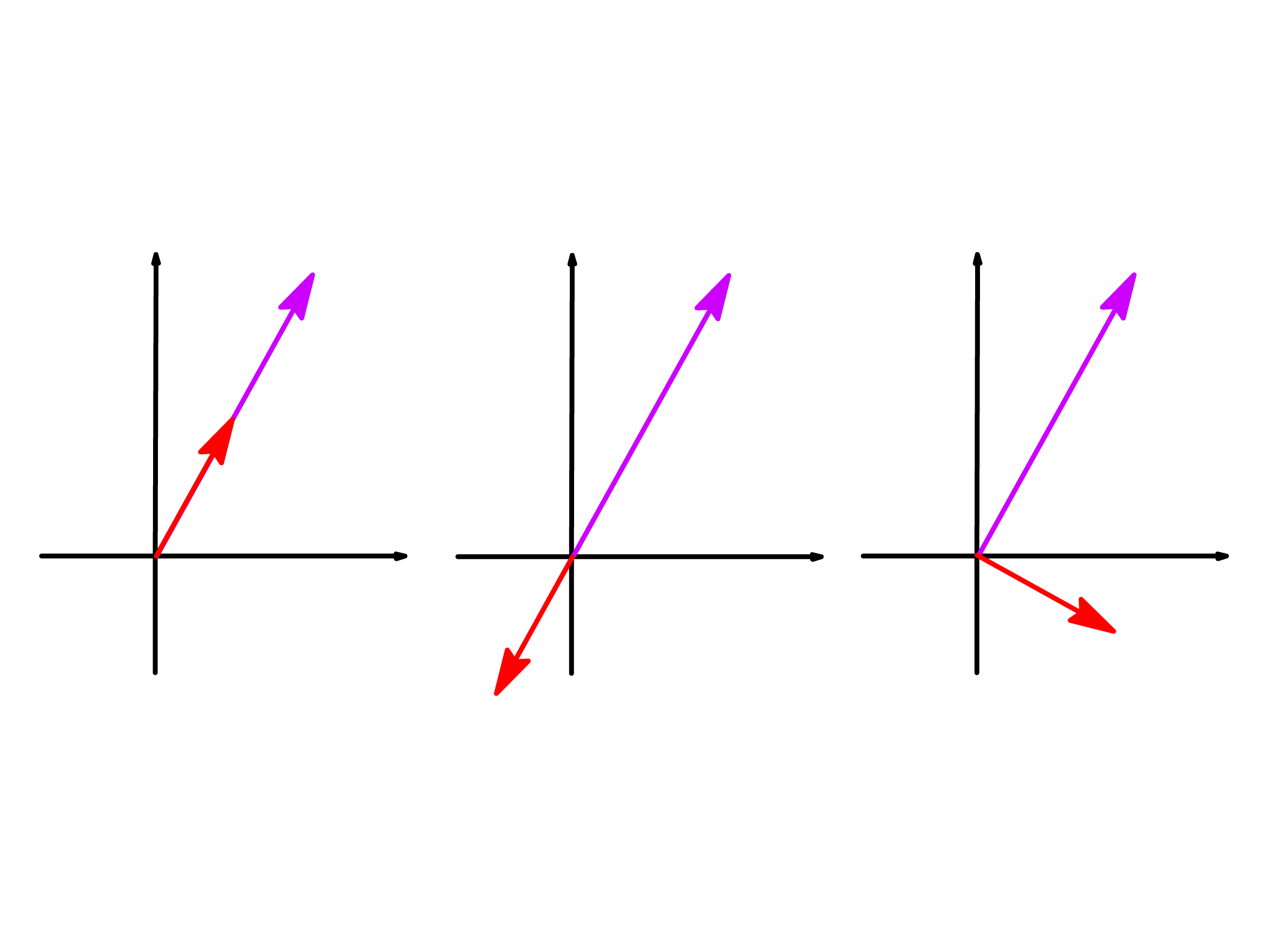

The dot product tells us how much two vectors point in the same direction and there are two factors that will affect the value of the dot product

- The longer the two vectors are, the larger the magnitude of the dot product is

- The length of a vector is given by the magnitude, or modulus, of the vector, written $|\vec{v}|$

- The magnitude of the dot product is directly proportional to the product of the lengths of the two vectors

- The more the two vectors point in the same direction, the larger the value of the dot product is

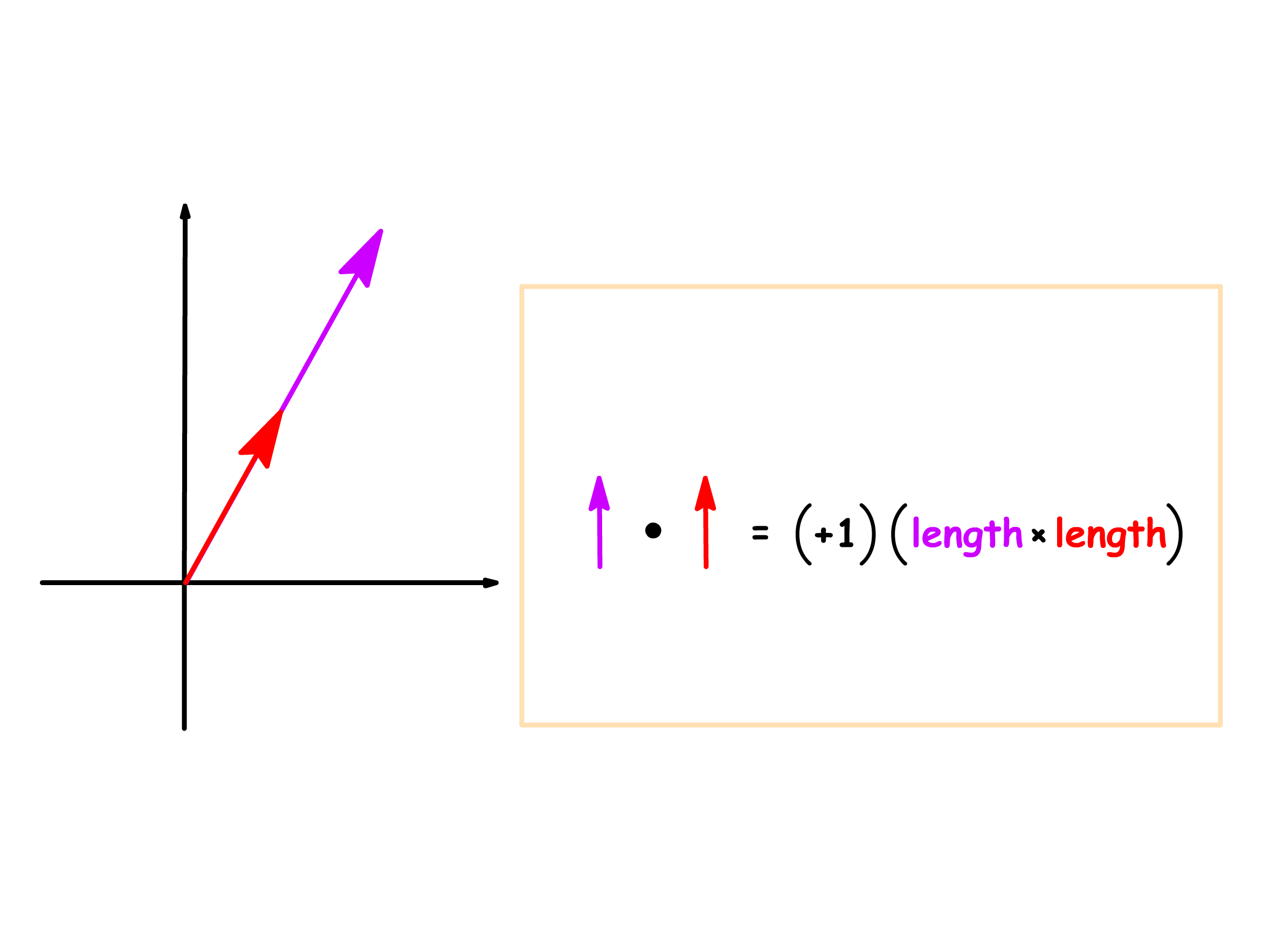

- The dot product is the largest and positive when they are pointing in the same direction

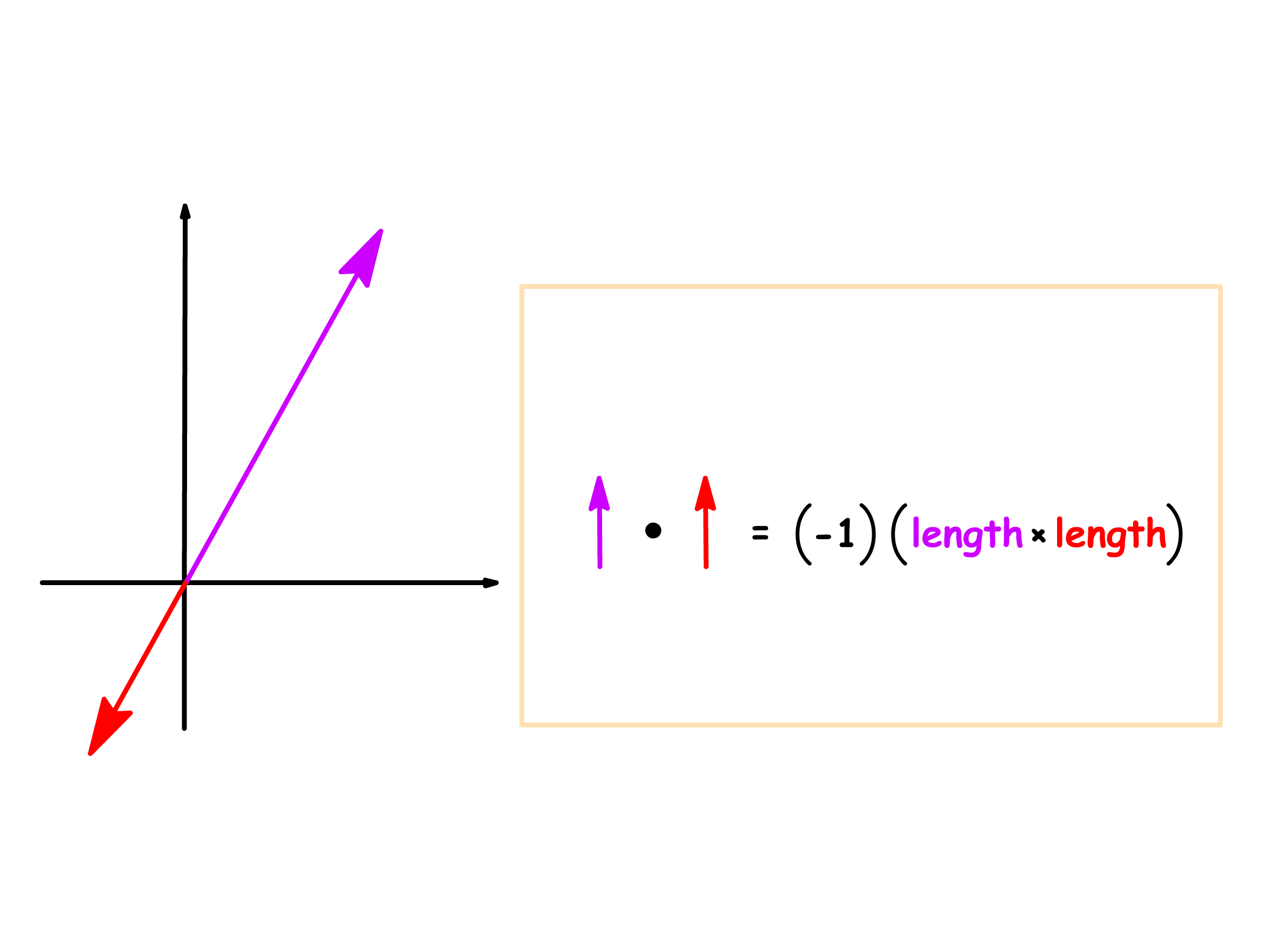

- The dot product is the smallest and negative when they are pointing in opposite directions

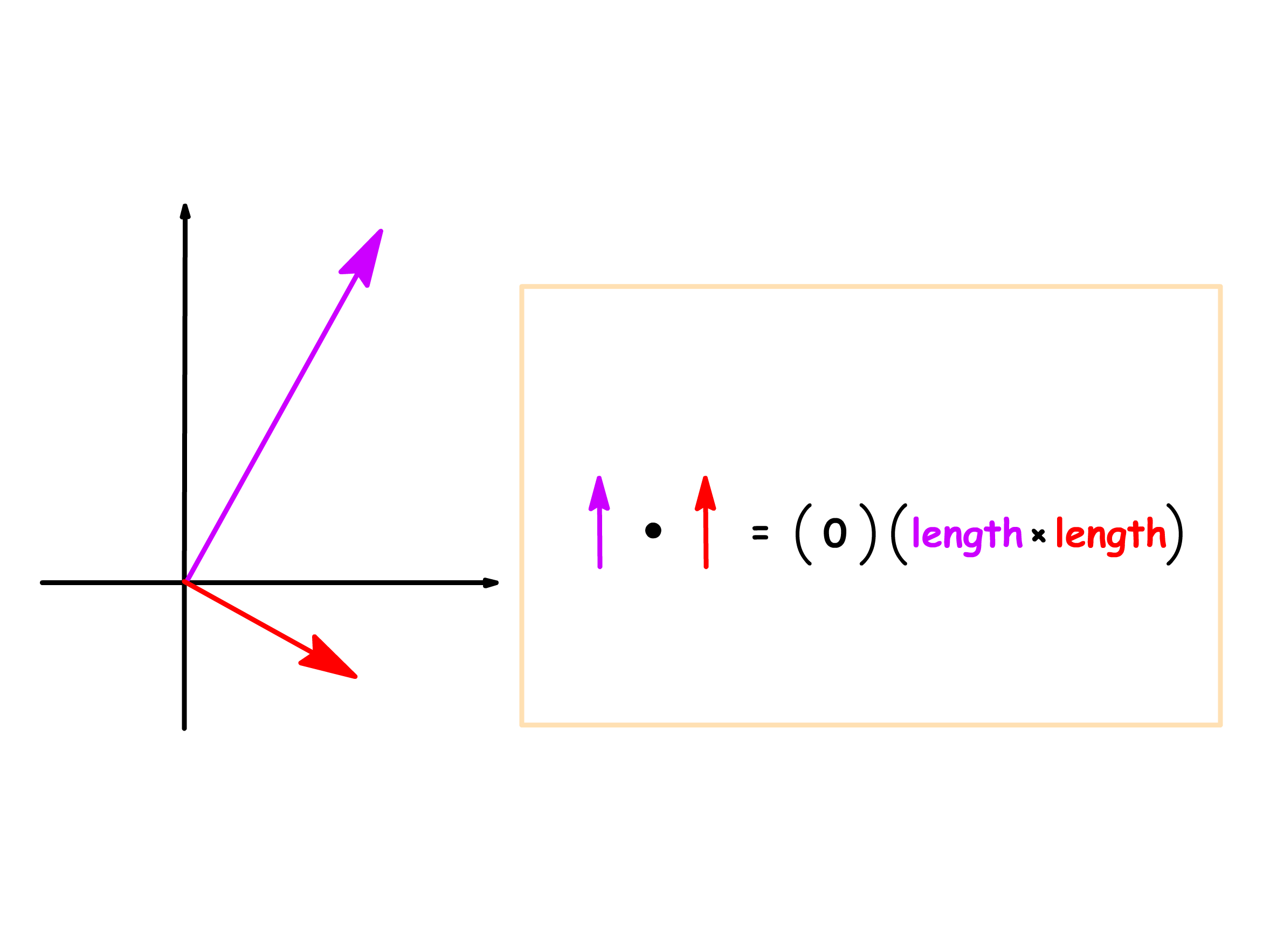

- Midway between the two extremes, when the vectors are perpendicular to each other, the dot product has a value of 0

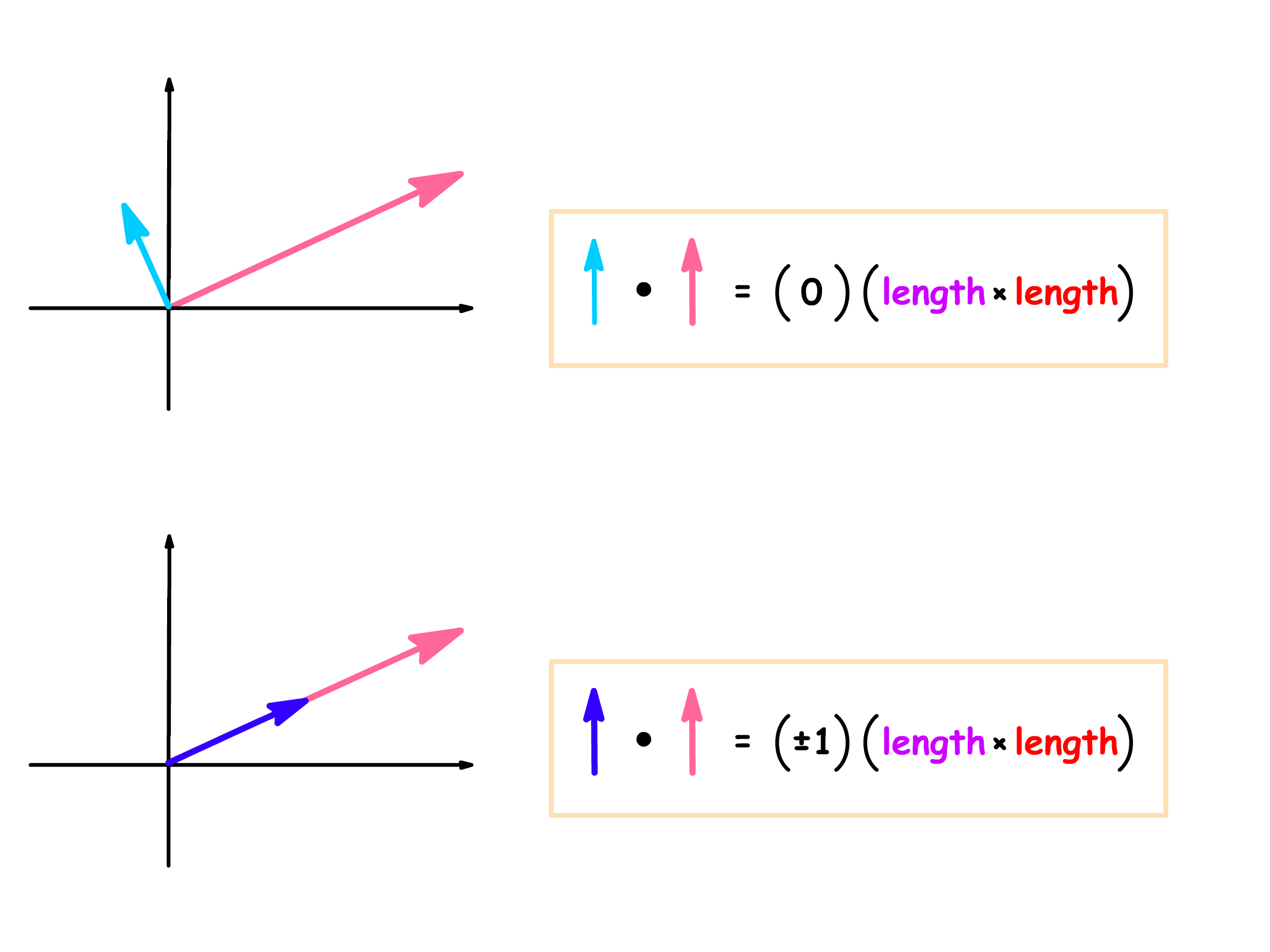

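To understand how we can apply the dot product in the general case, we start by looking at three special cases

- When the two vectors are scalar multiples of each other and are pointing in the same direction, the dot product is the product of their lengths, $\vec{v} \cdot \vec{w} = |\vec{v}| |\vec{w}|$

- When the two vectors are scalar multiples of each other and are pointing in opposite directions, the dot product is the negative of the product of their lengths, $\vec{v} \cdot \vec{w} = -|\vec{v}| |\vec{w}|$

- When the two vectors are perpendicular to each other, the dot product is zero, $\vec{v} \cdot \vec{w} = 0$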

Having established the three special cases, we can find the dot product of any two vectors by simply relating them back to those three cases

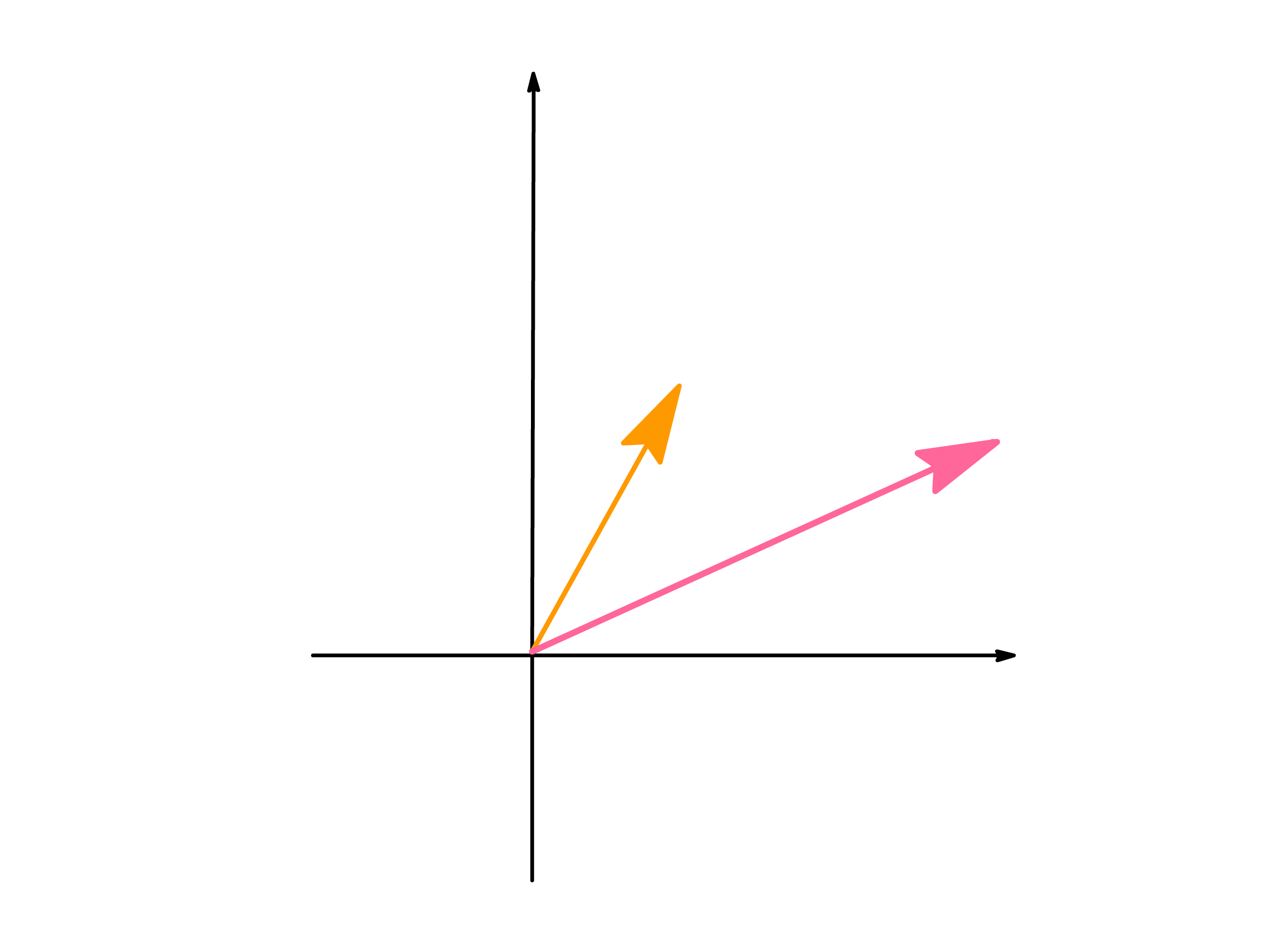

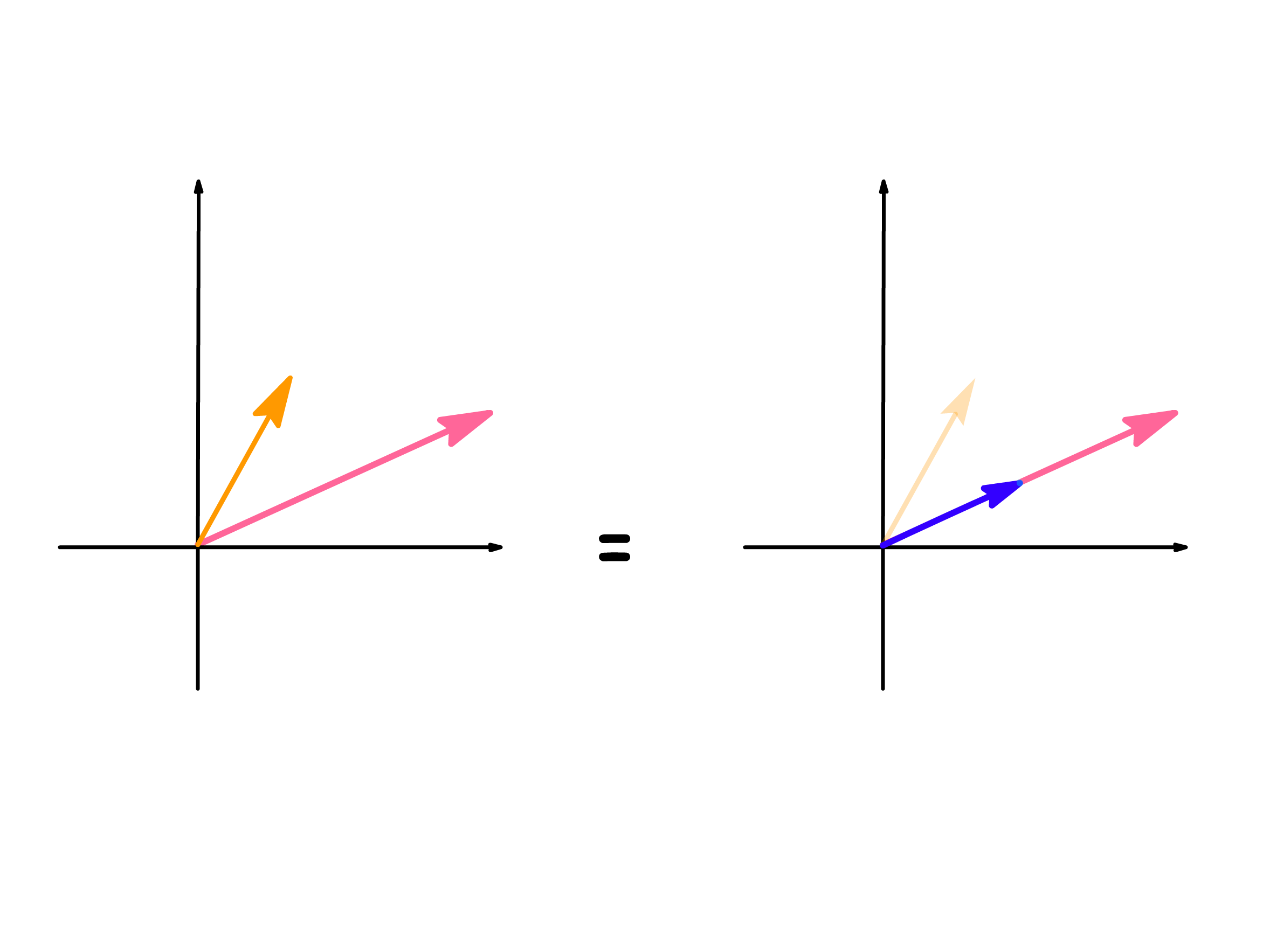

- Most of the time, vectors are not completely aligned or perpendicular to each other

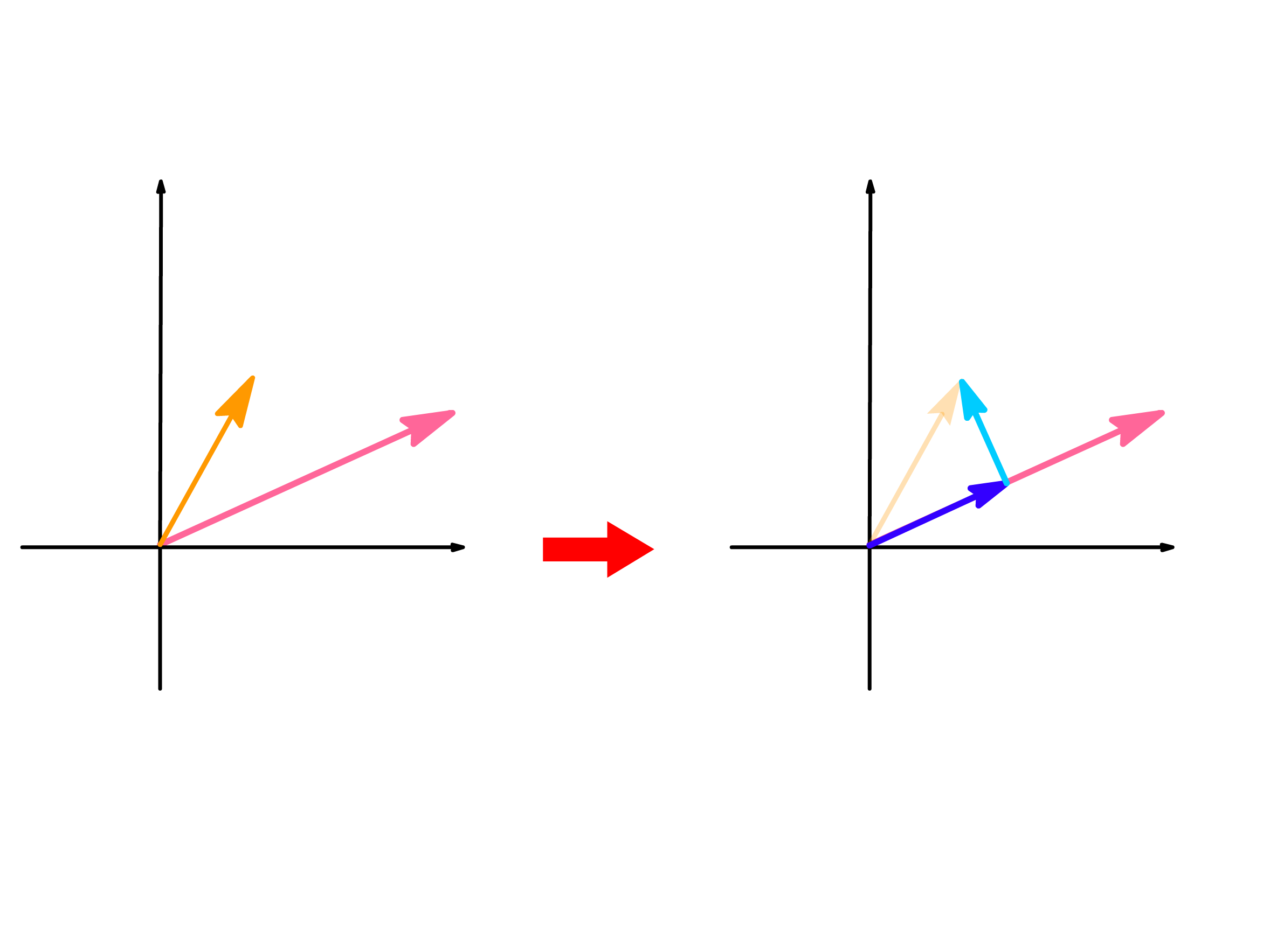

- In these situations, we can split one of the vectors into a sum of two vectors, $\vec{w} = \vec{w}_{\parallel} + \vec{w}_{\perp}$, where $\vec{w}_{\parallel}$ is aligned with the vector $\vec{v}$ we are dotting with, and $\vec{w}_{\perp}$ is perpendicular to it

- We then distribute the dot product, $\vec{v} \cdot \vec{w} = \vec{v} \cdot \vec{w}_{\parallel} + \vec{v} \cdot \vec{w}_{\perp}$

- This reduces the situation to two of the three special cases

- Since the dot product between the pair of perpendicular vectors is zero, the overall dot product is just given by the dot product of the pair of parallel vectors, $\vec{v} \cdot \vec{w} = \vec{v} \cdot \vec{w}_{\parallel}$

- This means that the dot product can be found by multiplying the length of one vector by the length of the projection of the other vector onto it, taking the result as negative when the projection points in the opposite direction
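The projection recipe can be sketched numerically if each arrow is described by its length and its angle from a common reference direction. That description, and the use of the cosine to get the signed length of the projection, are assumptions made for this example, since the notes do not introduce angles explicitly. `dot_from_projection` is a hypothetical name.

```python
import math


def dot_from_projection(length_v, angle_v, length_w, angle_w):
    """Dot product as |v| times the signed length of the projection of w onto v.

    Angles are in radians, measured from a common reference direction.
    """
    angle_between = angle_w - angle_v
    # Positive when w leans along v, negative when it leans against v.
    signed_projection = length_w * math.cos(angle_between)
    return length_v * signed_projection


same_direction = dot_from_projection(2.0, 0.0, 3.0, 0.0)
opposite = dot_from_projection(2.0, 0.0, 3.0, math.pi)
perpendicular = dot_from_projection(2.0, 0.0, 3.0, math.pi / 2)
in_between = dot_from_projection(2.0, 0.0, 3.0, math.pi / 3)

print(same_direction)  # 6.0
print(opposite)        # -6.0
print(math.isclose(perpendicular, 0.0, abs_tol=1e-12))  # True
print(math.isclose(in_between, 3.0))                    # True
```

The first three calls reproduce the three special cases, and the last one shows a general case that falls between them.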

One interesting property arises when we compute the dot product of a vector with itself

- Since a vector always points in the same direction as itself, the dot product of a vector with itself amounts to squaring the length of said vector, $\vec{v} \cdot \vec{v} = |\vec{v}|^2$

- In fact, given the dot product, we can find the corresponding length of the vector, $|\vec{v}| = \sqrt{\vec{v} \cdot \vec{v}}$

- In other words, we can define the length of a vector using the dot product

¶ Orthonormal Basis

Just like the previous two vector operations, we can describe the dot product mathematically by using a basis

- Doing so allows us to compute it without having to draw the diagrams

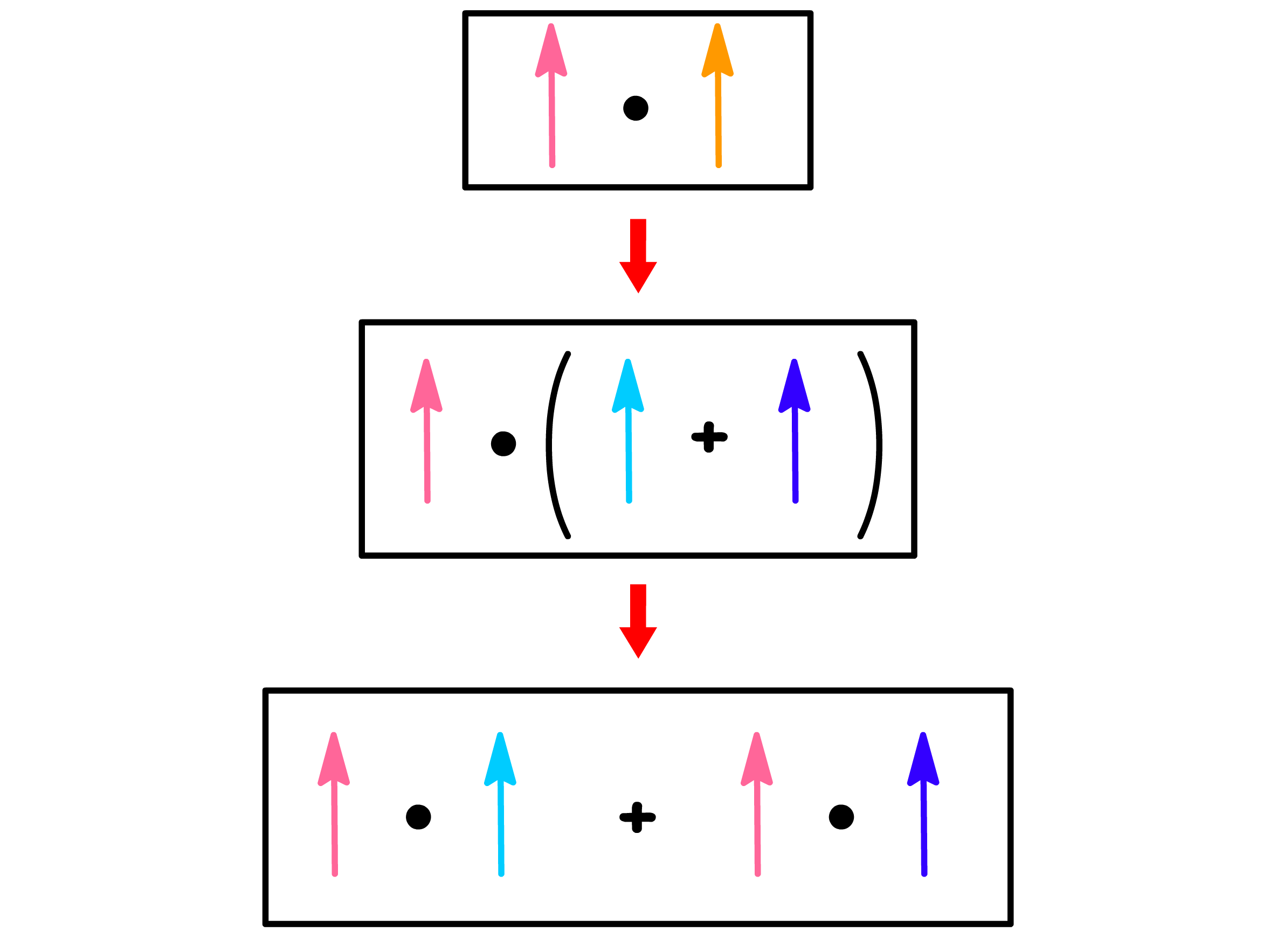

We can define the dot product using basis vectors

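- We first expand the two vectors in terms of the same basis, $\vec{v} = \sum_{i=1}^{n} v_i \vec{e}_i$ and $\vec{w} = \sum_{j=1}^{n} w_j \vec{e}_j$

- Distributing the dot product will give us $\vec{v} \cdot \vec{w} = \sum_{i=1}^{n} \sum_{j=1}^{n} v_i w_j \, (\vec{e}_i \cdot \vec{e}_j)$

- Our expansion has complicated the situation: instead of determining one dot product between two vectors, we now have to determine the $n^2$ dot products between pairs of basis vectors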

The issue with our initial attempt is that we tried to express the dot product in terms of the dot product of basis vectors, but we have no idea what the dot product between the basis vectors should be

- We can improve the expansion if we choose a basis where the dot products between the basis vectors can be known without doing any calculation

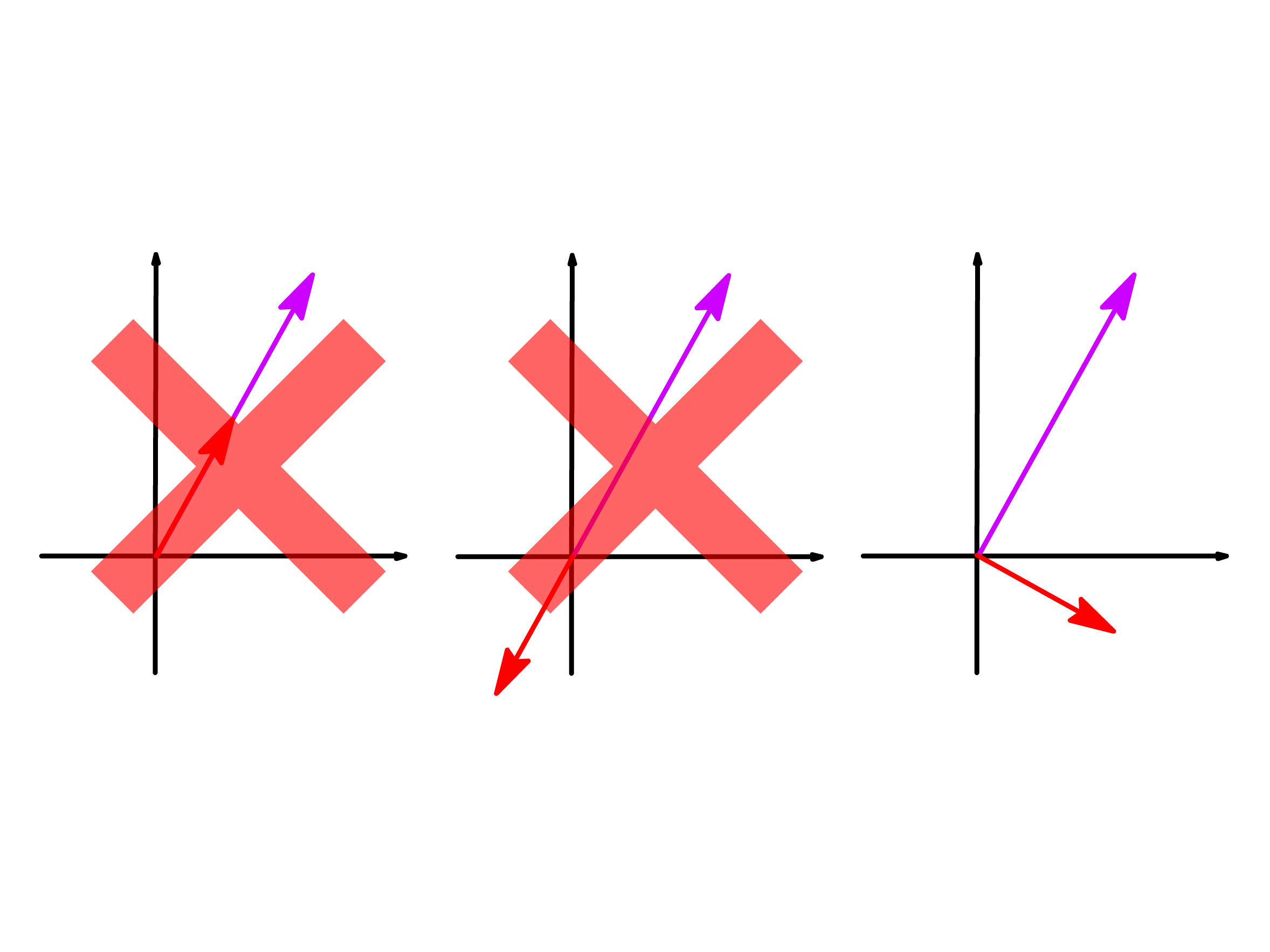

- Any two basis vectors in this chosen basis set must fall into one of the three special cases of dot product

- By definition, a basis set must be linearly independent, so no two basis vectors can be pointing in the same or opposite direction

- This leaves us with choosing a basis set where all the basis vectors are perpendicular to each other

A basis set where all the vectors are perpendicular to each other is called an orthogonal basis

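- Despite having the same meaning, we usually use the word orthogonal instead of perpendicular in maths

- It should go without saying that the dot product between any two different orthogonal basis vectors must be 0, that is, $\vec{e}_i \cdot \vec{e}_j = 0$ whenever $i \neq j$

- Choosing an orthogonal basis to compute the dot product will therefore greatly simplify the expansion to $\vec{v} \cdot \vec{w} = v_1 w_1 \, (\vec{e}_1 \cdot \vec{e}_1) + v_2 w_2 \, (\vec{e}_2 \cdot \vec{e}_2) + \dots + v_n w_n \, (\vec{e}_n \cdot \vec{e}_n)$, where $v_i$ and $w_i$ are the components of the two vectors

- We have effectively reduced the number of terms from $n^2$ to $n$

- Since a vector always points in the same direction as itself, the dot product of a basis vector with itself is the square of its length, $\vec{e}_i \cdot \vec{e}_i = |\vec{e}_i|^2$

- Hence, when the length of each basis vector is known, the dot product between the two vectors will also be known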

We can be even more ambitious and choose a basis that simplifies the above expression even further by controlling the length of the basis vectors as well

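- To make all the squared lengths $|\vec{e}_i|^2$ of the basis vectors disappear from the expression, we simply have to choose orthogonal basis vectors with a length of 1

- This choice simplifies the computation to one where we simply multiply the corresponding components of the two vectors and add up the results, $\vec{v} \cdot \vec{w} = v_1 w_1 + v_2 w_2 + \dots + v_n w_n$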

- The basis set where all the basis vectors are not only orthogonal to each other, but also of a length of 1, is called an orthonormal basis

An orthonormal basis is so convenient that, in most situations, it is the default choice of basis

- Hence, whenever a vector is presented in its column form without specifying the basis, we can be quite sure that the implied basis is an orthonormal one

- In fact, we usually express the dot product in an orthonormal basis using the column vectors, $\begin{bmatrix} v_1 \\ \vdots \\ v_n \end{bmatrix} \cdot \begin{bmatrix} w_1 \\ \vdots \\ w_n \end{bmatrix} = v_1 w_1 + \dots + v_n w_n$
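The difference between a general basis and an orthonormal one can be seen in a short sketch. It assumes the dot products between the basis vectors are supplied as a table `basis_dots`, where entry `[i][j]` holds the dot product of basis vectors i and j. That table and all function names are hypothetical and chosen only for illustration.

```python
import math


def dot_in_basis(v, w, basis_dots):
    """Full expansion: the sum over every pair (i, j) of v_i * w_j * (e_i . e_j)."""
    n = len(v)
    return sum(v[i] * w[j] * basis_dots[i][j] for i in range(n) for j in range(n))


def dot_orthonormal(v, w):
    """In an orthonormal basis, multiply corresponding components and add them up."""
    return sum(a * b for a, b in zip(v, w))


def length(v):
    """Length defined through the dot product (components in an orthonormal basis)."""
    return math.sqrt(dot_orthonormal(v, v))


v = [1, 2]
w = [3, 4]

orthonormal_dots = [[1, 0], [0, 1]]  # unit lengths, mutually perpendicular
orthogonal_dots = [[4, 0], [0, 9]]   # perpendicular, but with lengths 2 and 3

print(dot_in_basis(v, w, orthonormal_dots))  # 11
print(dot_orthonormal(v, w))                 # 11
print(dot_in_basis(v, w, orthogonal_dots))   # 84
print(length([3, 4]))                        # 5.0
```

The same two columns give different dot products in the two bases, which is why the shortcut of multiplying and adding components is only safe when the implied basis is orthonormal.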